Cross-Validation Strategies for Robust Cancer Prediction Models: A Guide for Biomedical Researchers


Abstract

This article provides a comprehensive guide to cross-validation strategies for developing and validating robust cancer prediction models. Aimed at researchers, scientists, and drug development professionals, it covers foundational principles, methodological applications for various data types (including high-dimensional genomic and clinical data), advanced troubleshooting and optimization techniques, and rigorous comparative validation. The content synthesizes current research to offer actionable insights for mitigating overfitting, assessing model generalizability, and implementing best practices that ensure reliable and clinically translatable predictive models in oncology.

The Critical Role of Cross-Validation in Modern Cancer Prediction

Core Concepts and Definitions

In the development of cancer prediction models, validation is a critical step that ensures the model's findings are reliable and applicable to new patient populations, rather than being artifacts of the specific dataset used for development. Three interconnected concepts are fundamental to this process: overfitting, optimism bias, and generalizability.

Overfitting occurs when a model learns not only the underlying true relationships in the training data but also the random noise specific to that dataset. This is akin to a student memorizing specific exam questions rather than understanding the underlying principles, consequently performing poorly on new questions that test the same concepts. Overfitting is particularly prevalent in high-dimensional settings where the number of potential predictors (e.g., genomic markers) far exceeds the number of observations (patients). This excessive model complexity leads to excellent performance on the training data but poor performance on new, unseen data [1].

Optimism Bias is the direct consequence of overfitting. It refers to the systematic overestimation of a model's predictive performance when evaluated on the same data used for its development. The model's performance appears optimistically good because it has already "seen" this data. The bias is quantified as the difference between the performance on the training data and the expected performance on new, independent data [2]. Mitigating this bias is a primary goal of robust internal validation techniques.
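Optimism can be estimated directly. The sketch below uses Harrell's bootstrap optimism correction, a standard technique that the text above does not prescribe. Each bootstrap model is scored on its own bootstrap sample and on the original data, and the average gap is subtracted from the apparent AUC. The synthetic data from scikit-learn's `make_classification`, the logistic model, `n_boot=100`, and the helper name `optimism_corrected_auc` are illustrative assumptions, not taken from the cited studies. Bootstrap corrections can themselves misestimate performance in small, high-dimensional samples, so treat the output as illustrative.

```python
import numpy as np
from sklearn.base import clone
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score


def optimism_corrected_auc(model, X, y, n_boot=100, seed=0):
    """Harrell-style bootstrap estimate of AUC optimism (illustrative sketch)."""
    rng = np.random.default_rng(seed)
    n = len(y)
    # Apparent performance: fit and evaluate on the same data
    full_fit = clone(model).fit(X, y)
    apparent = roc_auc_score(y, full_fit.predict_proba(X)[:, 1])

    gaps = []
    for _ in range(n_boot):
        idx = rng.integers(0, n, size=n)        # resample patients with replacement
        if np.unique(y[idx]).size < 2:          # AUC needs both classes present
            continue
        boot_fit = clone(model).fit(X[idx], y[idx])
        auc_on_boot = roc_auc_score(y[idx], boot_fit.predict_proba(X[idx])[:, 1])
        auc_on_orig = roc_auc_score(y, boot_fit.predict_proba(X)[:, 1])
        gaps.append(auc_on_boot - auc_on_orig)  # optimism of this replicate

    optimism = float(np.mean(gaps))
    return apparent, optimism, apparent - optimism


# Hypothetical p >> n setting: 100 patients, 500 features, 5 informative
X, y = make_classification(n_samples=100, n_features=500, n_informative=5, random_state=0)
apparent, optimism, corrected = optimism_corrected_auc(LogisticRegression(max_iter=1000), X, y)
print(f"apparent AUC {apparent:.3f}, optimism {optimism:.3f}, corrected AUC {corrected:.3f}")
```

With far more features than patients, the apparent AUC is typically close to 1, and the estimated optimism shows how much of that is memorized noise.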

Generalizability (or external validity) describes a model's ability to maintain its predictive accuracy when applied to data from different sources, such as patients from a different geographic region, hospital, or time period. It is the ultimate test of a model's clinical utility. A model that cannot generalize may lead to inaccurate predictions and potentially harmful clinical decisions when implemented in practice [3].

Internal Validation Strategies

Internal validation techniques use the available dataset to estimate and correct for the optimism bias inherent in a newly developed model. The table below summarizes the common strategies, their methodologies, and relative performance based on a simulation study in high-dimensional settings.

| Validation Method | Key Implementation Steps | Stability & Performance Findings (from simulation [4]) |
|---|---|---|
| Train-Test Split | Dataset is randomly split into a single training set (e.g., 70%) and a single test set (e.g., 30%). The model is built on the training set and evaluated on the held-out test set. | Performance was found to be unstable, heavily dependent on a single, arbitrary data split. |
| Bootstrap Validation | Multiple random samples are drawn with replacement from the full dataset to create many bootstrap training sets. Models are built on each and tested on the non-sampled data. | The conventional bootstrap was over-optimistic. The 0.632+ bootstrap variant was overly pessimistic, especially with small sample sizes (n=50 to n=100). |
| K-Fold Cross-Validation | The dataset is partitioned into K equally sized folds (e.g., K=5 or 10). Iteratively, K-1 folds are used for training and the remaining fold is used for validation. This process is repeated K times. | Demonstrated greater stability and is recommended for internal validation of high-dimensional models, particularly with sufficient sample sizes. |
| Nested Cross-Validation | A two-layer procedure. The inner loop performs cross-validation on the training set to tune model parameters (e.g., hyperparameters), while the outer loop provides an almost unbiased performance estimate. | Performance was robust but showed some fluctuations depending on the regularization method used for model development. |

Experimental Protocol for K-Fold Cross-Validation

A commonly used and robust internal validation method is K-Fold Cross-Validation. The following protocol, as applied in a study classifying five cancer types from DNA sequences, details its implementation [5]:

- Data Partitioning: The entire dataset is first divided into a training set and a completely independent hold-out test set (e.g., 80%/20% split). The test set is set aside and not used in any model building or tuning until the final evaluation.

- Stratification: To ensure each fold is representative of the overall class distribution, the training set is partitioned into K folds (typically K=5 or 10) using a stratified sampling approach. This preserves the percentage of samples for each cancer class in every fold.

- Iterative Training and Validation: The following process is repeated K times, once for each fold i (where i ranges from 1 to K):

  - Training Phase: All folds except fold i are combined to form a new training subset.

  - Model Fitting & Tuning: A model is fitted on this training subset. If hyperparameter tuning is required, it is performed within this training subset using a second, inner layer of cross-validation to avoid data leakage.

  - Validation Phase: The tuned model is used to predict the outcomes for the data in the held-out fold i. The performance metrics (e.g., AUC, accuracy) from this prediction are recorded.

- Performance Aggregation: After K iterations, each data point in the training set has been used exactly once for validation. The K performance estimates are then aggregated (e.g., by averaging) to produce a single, robust estimate of the model's predictive performance, which accounts for optimism bias.
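The protocol above can be sketched with scikit-learn as follows. It assumes a synthetic dataset in place of the DNA-sequence features used in [5], a support vector classifier as an arbitrary model choice, and a small hypothetical grid over `C`. The explicit loop makes the training, tuning, and validation phases visible and checks that every training-set sample is validated exactly once; the hold-out set is used only in the final evaluation.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import GridSearchCV, StratifiedKFold, train_test_split
from sklearn.svm import SVC

# Synthetic stand-in for the real feature matrix (assumption)
X, y = make_classification(n_samples=250, n_features=40, n_informative=8, random_state=0)

# Data partitioning: lock away a stratified 20% hold-out test set
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0
)

param_grid = {"C": [0.1, 1.0, 10.0]}  # hypothetical tuning grid
folds = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
fold_aucs = []
times_validated = np.zeros(len(y_train), dtype=int)

for train_idx, val_idx in folds.split(X_train, y_train):
    # Training + tuning phase: the inner 3-fold CV sees only the K-1 training folds
    search = GridSearchCV(SVC(), param_grid, cv=3, scoring="roc_auc")
    search.fit(X_train[train_idx], y_train[train_idx])
    # Validation phase: score held-out fold i with the tuned model
    scores = search.decision_function(X_train[val_idx])
    fold_aucs.append(roc_auc_score(y_train[val_idx], scores))
    times_validated[val_idx] += 1

cv_auc = float(np.mean(fold_aucs))  # performance aggregation

# Final evaluation: tune on the full training set, then use the hold-out set once
final_search = GridSearchCV(SVC(), param_grid, cv=5, scoring="roc_auc").fit(X_train, y_train)
holdout_auc = roc_auc_score(y_test, final_search.decision_function(X_test))
print(f"CV AUC {cv_auc:.3f} (SD {np.std(fold_aucs, ddof=1):.3f}); hold-out AUC {holdout_auc:.3f}")
```

Reporting the cross-validated AUC next to the single hold-out AUC shows whether the internal estimate and the untouched test set broadly agree.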

External Validation and Risk of Bias Assessment

While internal validation estimates optimism, external validation is the process of evaluating a model's performance on data that was completely independent of the development process, often collected from different locations or time periods [3]. It is the gold standard for assessing a model's real-world generalizability. For instance, a recent large-scale study developed cancer diagnosis algorithms on a population of 7.46 million patients in England and validated them on two separate cohorts totaling over 5.3 million patients from across the UK, demonstrating superior performance compared to existing models [3].

To systematically evaluate the methodological quality of prediction model studies, the Prediction model Risk Of Bias ASsessment Tool (PROBAST) was developed. This tool is critical for researchers and clinicians to judge the trustworthiness of a published model. PROBAST assesses four domains [6] [7]:

- Participants: Were the participants and the data source appropriate for the research question and representative of the target population?

- Predictors: Were the predictors defined, assessed, and measured in a similar way for all participants?

- Outcome: Was the outcome of interest defined and determined in a robust and consistent manner?

- Analysis: This is the most critical domain. It evaluates issues like sample size, handling of continuous predictors, and most importantly, whether the model was validated appropriately and whether the validation accounted for overfitting and optimism bias.

A systematic review using PROBAST to assess prognostic models in oncology developed with machine learning found that a staggering 84% of developed models were at a high risk of bias, with the "analysis" domain being the largest contributor [7]. Common flaws included insufficient sample size and the use of simple data-splitting without other internal validation techniques, leading to overoptimistic results.

The Scientist's Toolkit: Research Reagent Solutions

The following table details key methodological "reagents" and their functions in the validation of cancer prediction models.

| Research Reagent (Method/Technique) | Primary Function in Validation |
|---|---|
| PROBAST (Prediction model Risk Of Bias ASsessment Tool) | A structured tool to critically appraise a prediction model study for potential methodological shortcomings and risk of bias across participants, predictors, outcome, and analysis domains [6] [7]. |
| Regularization (e.g., Lasso, Ridge) | A statistical technique used during model fitting to reduce model complexity and prevent overfitting by penalizing the magnitude of model coefficients [1]. |
| Bootstrap Resampling | A statistical method that involves repeatedly sampling with replacement from the original dataset. It is used to estimate the distribution of a statistic (e.g., model optimism) and correct for it [4] [2]. |
| Shrinkage | A post-development correction factor applied to model coefficients to make the model's predictions less extreme (more conservative), thereby improving generalizability [2]. |
| Nomogram | A graphical calculating device that provides a visual representation of a multivariate statistical model, enabling clinicians to easily compute an individual patient's predicted probability of an outcome [8]. |
| Grid Search | A hyperparameter optimization technique that systematically works through a manually specified subset of the hyperparameter space to find the combination that yields the best model performance, typically evaluated via cross-validation [5]. |
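The Shrinkage entry does not say how the correction factor is obtained. One common closed-form choice, named here as an assumption rather than as the method of [2], is the heuristic shrinkage factor of van Houwelingen and Le Cessie. It uses the model likelihood-ratio statistic $\chi^2_{\text{model}}$ and its degrees of freedom $\mathrm{df}$ (the number of estimated predictor coefficients):

$$
\hat{s} = \frac{\chi^2_{\text{model}} - \mathrm{df}}{\chi^2_{\text{model}}}, \qquad \hat{\beta}_j^{\text{shrunk}} = \hat{s}\,\hat{\beta}_j .
$$

As a hypothetical example, a model with $\chi^2_{\text{model}} = 60$ on $\mathrm{df} = 10$ gives $\hat{s} = 50/60 \approx 0.83$, so every coefficient is pulled about 17% toward zero. The intercept (or baseline hazard) is then typically re-estimated so that the average predicted risk still matches the observed event rate.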

The Critical Need for Rigorous Validation in High-Dimensional Oncology Data

Modern oncology research increasingly relies on high-dimensional data, where the number of features (such as genomic or transcriptomic variables) vastly exceeds the number of patient samples. While predictive models built from this data hold tremendous promise for personalized cancer care, they are particularly vulnerable to overfitting and optimism bias, where performance estimates on training data are unrealistically high compared to true performance on independent data. This challenge is especially acute with time-to-event endpoints like survival or disease recurrence, where right-censoring adds further complexity [9]. Consequently, rigorous internal validation is not merely a statistical formality but a critical prerequisite for developing reliable models that can genuinely inform clinical decision-making and drug development pipelines.

This guide provides an objective comparison of common internal validation strategies for high-dimensional oncology data, framing them within the broader thesis that cross-validation strategy selection directly impacts performance estimation accuracy and future model utility.

Comparative Analysis of Internal Validation Strategies

A recent simulation study provides a direct benchmark of internal validation methods in a high-dimensional time-to-event setting, typical in oncology. The study simulated datasets inspired by a real-world head and neck cancer cohort, incorporating clinical variables and 15,000 transcriptomic features with realistic distributions [9]. The performance of Cox penalized regression models was assessed using various validation methods, measuring discrimination (time-dependent AUC and C-index) and calibration (3-year integrated Brier Score) across sample sizes from 50 to 1000 [9].

Table 1: Comparison of Internal Validation Method Performance in High-Dimensional Settings

| Validation Method | Key Principle | Stability with Small Samples (n=50-100) | Performance with Larger Samples (n=500-1000) | Risk of Optimism Bias | Recommended Use Case |
|---|---|---|---|---|---|
| Train-Test Split (70:30) | Single split into training and testing sets | Unstable performance | More stable but inefficient data use | Moderate | Preliminary exploration only |
| Conventional Bootstrap | Repeated sampling with replacement | Over-optimistic | Over-optimistic | High | Not recommended |
| 0.632+ Bootstrap | Weighted combination of apparent and bootstrap error | Overly pessimistic | Improves but can remain pessimistic | Low (pessimistic) | Specific scenarios requiring bias correction |
| K-Fold Cross-Validation | Data split into K folds; each fold used once for testing | Good stability | High stability and reliability | Low | Recommended for most scenarios |
| Nested Cross-Validation | Outer loop for performance estimation; inner loop for model selection | Good stability | High stability, but can fluctuate with regularization | Very Low | Recommended when hyperparameter tuning is needed |

The data reveals that k-fold cross-validation and nested cross-validation are the most reliable strategies, offering a superior balance between bias reduction and stability, especially when sample sizes are sufficient [9]. In contrast, simpler methods like train-test splitting or conventional bootstrap resampling demonstrate significant limitations for high-dimensional prognostic models.

Detailed Experimental Protocols and Methodologies

Simulation Framework for Benchmarking

The foundational study for this comparison employed a rigorous simulation protocol to ensure biologically and clinically relevant findings [9]:

- Data Generation Mechanism: Clinical variables (age, sex, HPV status, TNM staging) were simulated based on distributions from the SCANDARE head and neck cohort (NCT03017573). Transcriptomic data for 15,000 transcripts were generated using a four-step process that replicated the mean expression, dispersion, and skewed distribution of real RNA-seq data [9].

- Time-to-Event Simulation: Individual disease-free survival times were generated using an inverted cumulative hazard method. The model incorporated coefficients for clinical variables estimated from the real cohort and assumed 200 of the 15,000 transcripts were truly associated with recurrence risk, with coefficients drawn from uniform distributions [9].

- Experimental Replicates: For each sample size scenario (50, 75, 100, 500, 1000), 100 fully independent dataset replicates were generated and analyzed to ensure robust performance estimates [9].
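The inverted cumulative hazard step can be sketched as below. Under a proportional hazards model, $S(t \mid x) = \exp\{-H_0(t)\,e^{x^\top\beta}\}$, so drawing $U \sim \mathrm{Uniform}(0,1)$ and setting $T = H_0^{-1}\big(-\log U \cdot e^{-x^\top\beta}\big)$ yields event times with the intended hazard. The Weibull baseline $H_0(t) = \lambda t^{\nu}$, the Gaussian expression matrix, the coefficient range, the uniform censoring, and the reduced default sizes are all assumptions for illustration. The benchmark study also included clinical covariates and RNA-seq-like distributions, which this sketch omits, and the function names are hypothetical.

```python
import numpy as np


def inverse_weibull_cumhaz(h, lam, nu):
    """Inverse of the Weibull baseline cumulative hazard H0(t) = lam * t**nu."""
    return (h / lam) ** (1.0 / nu)


def simulate_dfs(n, n_transcripts=2000, n_true=50, lam=0.1, nu=1.2,
                 coef_range=(-0.3, 0.3), censor_max=10.0, seed=0):
    """Simulate censored disease-free survival times (hypothetical parameters)."""
    rng = np.random.default_rng(seed)
    # Standardized Gaussian "expression" matrix, used only for brevity
    X = rng.standard_normal((n, n_transcripts))
    # Only n_true transcripts carry signal, with uniformly drawn coefficients
    beta = np.zeros(n_transcripts)
    true_idx = rng.choice(n_transcripts, size=n_true, replace=False)
    beta[true_idx] = rng.uniform(*coef_range, size=n_true)
    linear_predictor = X @ beta
    # Inverted cumulative hazard: H0(T) * exp(lp) = -log(U), with U in (0, 1]
    u = 1.0 - rng.random(n)
    event_time = inverse_weibull_cumhaz(-np.log(u) * np.exp(-linear_predictor), lam, nu)
    # Independent uniform censoring (assumption)
    censor_time = rng.uniform(0.0, censor_max, size=n)
    time = np.minimum(event_time, censor_time)
    event = (event_time <= censor_time).astype(int)
    return X, time, event, beta


X, time, event, beta = simulate_dfs(100, seed=1)
print(f"{event.mean():.0%} of simulated patients had a recurrence before censoring")
```

Calling `simulate_dfs(n, n_transcripts=15000, n_true=200)` would match the dimensions described in the study, at a higher memory cost.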

Model Training and Validation Workflow

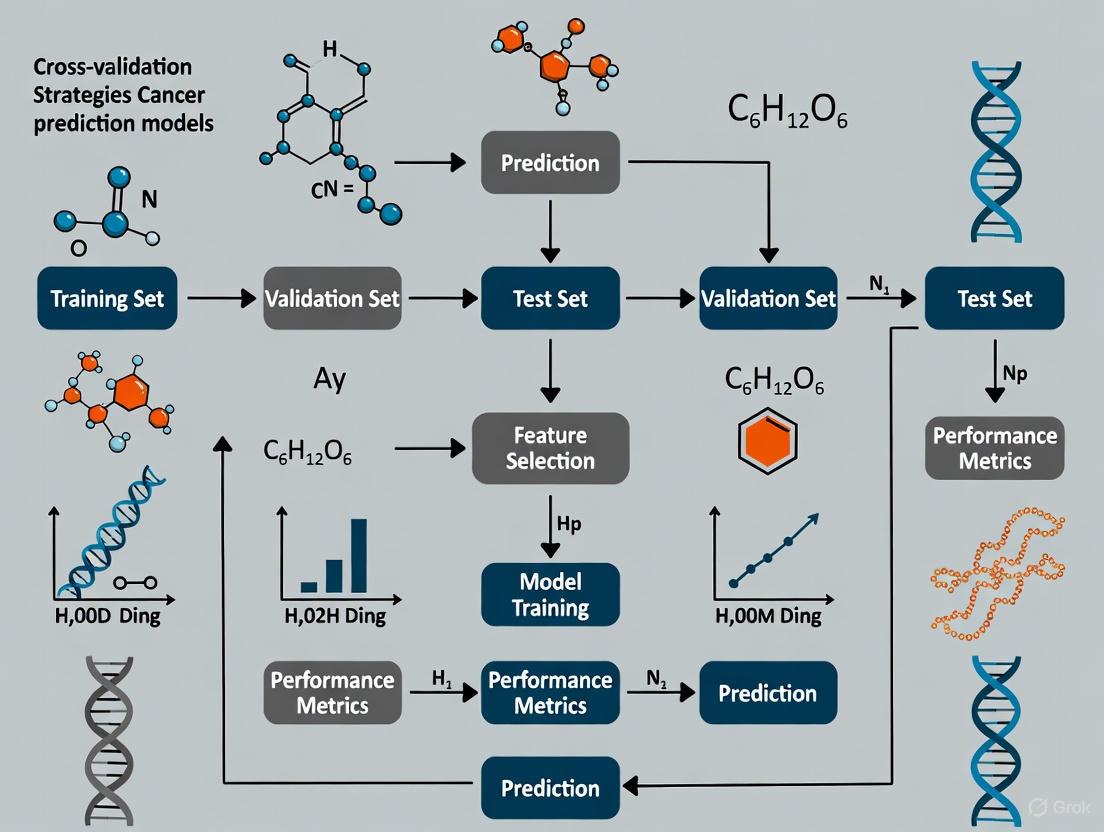

The following diagram illustrates the core experimental workflow for training and validating a high-dimensional Cox regression model, as implemented in the benchmark study.

Performance Evaluation Metrics

The benchmark study evaluated model performance using metrics critical for time-to-event data [9]:

- Discrimination: Ability to separate patients with different event times.

  - Time-dependent AUC and Harrell's C-index were used. The C-index is a generalization of the AUC to censored data.

- Calibration: Agreement between predicted and observed event probabilities.

  - The 3-year integrated Brier score (IBS) for disease-free survival was used; lower scores indicate better performance. Because the Brier score also reflects discrimination, the IBS is best read as an overall accuracy measure with a strong calibration component.
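Harrell's C-index can be computed from first principles, which makes the handling of censoring explicit: a pair of patients is usable only if the one with the shorter observed time had the event, and the pair is concordant if that patient also received the higher predicted risk. The function below is a hypothetical, quadratic-time sketch that skips tied event times; for real analyses, scikit-survival's `concordance_index_censored` or R's survival package handle ties and scale better.

```python
import numpy as np


def harrell_c_index(time, event, risk):
    """Harrell's C-index (sketch): ties in time are skipped, ties in risk count 0.5."""
    time, event, risk = map(np.asarray, (time, event, risk))
    concordant, comparable = 0.0, 0
    for i in range(len(time)):
        if not event[i]:
            continue  # a censored patient cannot anchor a usable pair
        for j in range(len(time)):
            if time[i] < time[j]:  # patient i failed while j was still at risk
                comparable += 1
                if risk[i] > risk[j]:
                    concordant += 1.0
                elif risk[i] == risk[j]:
                    concordant += 0.5
    return concordant / comparable


# Hypothetical cohort: higher risk scores should mean earlier recurrence
time = [2.0, 5.0, 3.5, 8.0, 1.0]
event = [1, 0, 1, 1, 0]
risk = [0.9, 0.2, 0.6, 0.7, 0.5]
print(harrell_c_index(time, event, risk))  # 0.8 (4 of 5 usable pairs concordant)
```

A value of 0.5 corresponds to random ranking and 1.0 to perfect ranking of event times.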

Building and validating robust prediction models requires a suite of methodological tools and software resources.

Table 2: Essential Research Toolkit for High-Dimensional Model Validation

| Category | Tool/Reagent | Primary Function | Application Notes |
|---|---|---|---|
| Statistical Methods | Cox Proportional Hazards Model | Models relationship between features and survival time | Foundation for time-to-event analysis [9] [10] |
| | Penalized Regression (LASSO, Elastic Net) | Performs variable selection and regularization in high-dimensional settings (p >> n) | Prevents overfitting; improves model sparsity [9] [10] |
| Validation Algorithms | K-Fold Cross-Validation | Robustly estimates model performance by partitioning data into K subsets | Recommended for stability; balances bias and variance [9] |
| | Nested Cross-Validation | Provides nearly unbiased performance estimation when also tuning hyperparameters | Essential for complex model selection [9] |
| Software & Platforms | R Statistical Software | Open-source environment for statistical computing and graphics | Primary platform used in benchmark study (version 4.4.0) [9] |
| | Python with scikit-survival | Machine learning library with specialized survival analysis capabilities | Alternative for implementing similar validation workflows |
| Data Resources | Nationwide Claim Cohorts (e.g., NHIS) | Large-scale, structured data for model development and validation | Enables development of practical, patient-level prediction models [11] |

The empirical evidence clearly demonstrates that the choice of internal validation strategy is not neutral; it determines how accurately the performance of high-dimensional oncology prediction models is estimated, and therefore which models are carried forward. While k-fold and nested cross-validation currently represent the most reliable approaches, the field continues to evolve. Future research directions include the development of more sophisticated dynamic prediction models that incorporate longitudinal biomarker data to update risk assessments in real time [10], and the integration of multimodal deep learning frameworks that can effectively combine diverse data types such as clinical, genomic, and imaging data [12]. For researchers and drug developers, prioritizing rigorous validation is a critical investment, ensuring that predictive models translate into genuine clinical utility and advance the frontier of personalized oncology.

In the field of cancer research, the development of robust predictive models using high-dimensional data such as genomics, transcriptomics, and medical imaging has become increasingly prevalent. Internal validation of these models is a critical step to mitigate optimism bias and ensure reliable performance estimates before proceeding to external validation [9]. For researchers, scientists, and drug development professionals, selecting an appropriate validation strategy is paramount, as it directly impacts model generalizability and potential clinical utility. The complex nature of cancer data—often characterized by high dimensionality, limited samples, class imbalance, and correlated features—presents unique challenges that necessitate careful consideration of validation methodologies [13] [9].

This guide provides a comprehensive comparison of common internal validation strategies, with a specific focus on their application in cancer prediction models. We will examine the performance characteristics, implementation requirements, and appropriate use cases for each method, supported by experimental data from recent cancer studies. Understanding these strategies will enable more rigorous model development and more accurate assessment of true predictive performance in oncological applications.

Core Internal Validation Methods

Fundamental Validation Approaches

Internal validation strategies exist on a spectrum from simple holdout methods to sophisticated resampling techniques, each with distinct advantages and limitations in the context of cancer prediction research.

Train-Test Split (also called holdout validation) involves randomly partitioning the available data into separate training and testing sets, typically using 70-80% of the data for model development and the remaining 20-30% for performance evaluation [13] [14]. While computationally efficient and conceptually straightforward, this approach can yield unstable performance estimates, particularly with the smaller datasets commonly encountered in cancer studies [9] [15]. For instance, in a mammography radiomics study predicting upstaging of ductal carcinoma in situ, models built from different training sets showed considerable variation, with AUCs ranging from 0.59 to 0.70 on training sets and from 0.59 to 0.73 on test sets across different data splits [15].

K-Fold Cross-Validation addresses some limitations of simple train-test splitting by partitioning the entire dataset into k roughly equal-sized folds (typically k=5 or 10) [14]. The model is trained on k-1 folds and validated on the remaining fold, repeating this process k times with each fold serving as the validation set once [5]. The final performance estimate is calculated as the average across all k iterations. This approach provides more stable performance estimates than single train-test splits and utilizes data more efficiently, making it particularly valuable for smaller cancer datasets [9] [14]. In a study classifying five cancer types using RNA-seq data, 5-fold cross-validation demonstrated excellent stability and achieved a classification accuracy of 99.87% with Support Vector Machines [13].

Stratified K-Fold Cross-Validation is a variant that preserves the class distribution proportions in each fold, which is especially important for cancer datasets with imbalanced outcomes [14]. For example, in a breast cancer classification study, stratified shuffle split cross-validation helped maintain consistent class ratios across splits, contributing to more reliable performance estimation [16].
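The effect of stratification is easiest to see on an imbalanced outcome stored in sorted order, as happens when case and control files are concatenated. In the sketch below (synthetic labels with 10 events among 100 patients, an assumption for illustration), scikit-learn's `StratifiedKFold` places exactly two events in each of five folds. An unshuffled `KFold`, by contrast, puts all ten events in the first fold and leaves four validation folds with no events at all.

```python
import numpy as np
from sklearn.model_selection import KFold, StratifiedKFold

# Hypothetical imbalanced outcome, sorted with all events first
y = np.array([1] * 10 + [0] * 90)
X = np.zeros((len(y), 1))  # placeholder features; fold assignment ignores them


def events_per_fold(splitter):
    """Count events in each validation fold produced by a scikit-learn splitter."""
    return [int(y[val_idx].sum()) for _, val_idx in splitter.split(X, y)]


stratified_counts = events_per_fold(StratifiedKFold(n_splits=5))
plain_counts = events_per_fold(KFold(n_splits=5))
print("StratifiedKFold:", stratified_counts)  # [2, 2, 2, 2, 2]
print("KFold:          ", plain_counts)       # [10, 0, 0, 0, 0]
```

Shuffling before an unstratified split avoids the extreme case shown here, but only stratification guarantees balanced event counts per fold.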

Nested Cross-Validation employs two levels of cross-validation: an inner loop for hyperparameter tuning and model selection, and an outer loop for performance estimation [9] [4]. This strict separation between model selection and evaluation provides nearly unbiased performance estimates but requires substantial computational resources [14]. In high-dimensional prognosis models for head and neck cancer, nested cross-validation demonstrated good performance, though with some fluctuations depending on the regularization method used for model development [9].

Bootstrap Methods involve repeatedly sampling from the dataset with replacement to create multiple training sets, with the out-of-bag samples used for validation [9]. The standard bootstrap approach tends to be over-optimistic, while the corrected 0.632+ bootstrap method can be overly pessimistic, particularly with small sample sizes (n=50 to n=100) common in cancer studies [9].
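The 0.632+ estimator mentioned above combines the apparent error with the leave-one-out bootstrap error (each sample scored only by models whose bootstrap sample excluded it), giving the latter more weight as the gap between the two grows relative to the no-information error rate. The sketch below follows the Efron and Tibshirani (1997) formulation for misclassification error in binary or multiclass classification only; time-to-event applications would need a survival-appropriate loss. The helper name `bootstrap_632_errors`, the synthetic data, and `n_boot=100` are assumptions.

```python
import numpy as np
from sklearn.base import clone
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression


def bootstrap_632_errors(model, X, y, n_boot=100, seed=0):
    """Return apparent, leave-one-out bootstrap, .632 and .632+ misclassification error."""
    rng = np.random.default_rng(seed)
    n = len(y)
    full_fit = clone(model).fit(X, y)
    y_hat = full_fit.predict(X)
    err_app = float(np.mean(y_hat != y))  # apparent (resubstitution) error

    loss_sum, loss_cnt = np.zeros(n), np.zeros(n)
    for _ in range(n_boot):
        idx = rng.integers(0, n, size=n)
        oob = np.setdiff1d(np.arange(n), idx)  # samples left out of this replicate
        if oob.size == 0 or np.unique(y[idx]).size < 2:
            continue
        boot_fit = clone(model).fit(X[idx], y[idx])
        loss_sum[oob] += (boot_fit.predict(X[oob]) != y[oob])
        loss_cnt[oob] += 1
    seen = loss_cnt > 0
    err1 = float(np.mean(loss_sum[seen] / loss_cnt[seen]))  # leave-one-out bootstrap error

    # No-information error rate: expected error if labels and predictions were independent
    classes = np.unique(y)
    p_obs = np.array([np.mean(y == c) for c in classes])
    q_pred = np.array([np.mean(y_hat == c) for c in classes])
    gamma = float(np.sum(p_obs * (1.0 - q_pred)))

    err1_prime = min(err1, gamma)
    if err1 > err_app and gamma > err_app:
        r = (err1_prime - err_app) / (gamma - err_app)  # relative overfitting rate
    else:
        r = 0.0
    err_632 = 0.368 * err_app + 0.632 * err1
    err_632plus = err_632 + (err1_prime - err_app) * (0.368 * 0.632 * r) / (1.0 - 0.368 * r)
    return {"apparent": err_app, "loo_boot": err1, "632": err_632, "632plus": err_632plus}


X, y = make_classification(n_samples=80, n_features=500, n_informative=10, random_state=0)
errors = bootstrap_632_errors(LogisticRegression(max_iter=1000), X, y)
print({k: round(v, 3) for k, v in errors.items()})
```

When the apparent error is near zero, as is common with p >> n, the relative overfitting rate approaches 1 and the 0.632+ estimate moves toward the leave-one-out bootstrap error, which is one route to the pessimism reported for small samples.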

Comparative Analysis of Validation Strategies

The table below summarizes the key characteristics, advantages, and limitations of each primary validation method in the context of cancer prediction research:

Table 1: Comparison of Internal Validation Strategies for Cancer Prediction Models

| Validation Method | Key Characteristics | Optimal Use Cases in Cancer Research | Advantages | Limitations |
|---|---|---|---|---|
| Train-Test Split | Single random partition (typically 70/30 or 80/20) | Preliminary model screening with large datasets (>1000 samples) [15] | Computationally efficient; simple implementation | High variance with small datasets; unstable performance estimates [9] [15] |
| K-Fold Cross-Validation | Data divided into k folds; each fold used once as validation | Small to moderate-sized cancer datasets; stable performance estimation [13] [9] | More stable than train-test; efficient data utilization | Can be computationally intensive with large k; requires careful fold creation |
| Stratified K-Fold CV | Preserves class distribution in each fold | Imbalanced cancer outcomes (e.g., rare cancer types) [16] [14] | More reliable for imbalanced data; reduces bias | More complex implementation; requires class labels during fold creation |
| Nested Cross-Validation | Inner loop for model selection; outer for evaluation | High-dimensional settings with hyperparameter tuning [9] [4] | Nearly unbiased performance estimates | Computationally expensive; complex implementation |
| Bootstrap | Multiple samples with replacement; out-of-bag validation | Small datasets where data efficiency is critical [9] | Good statistical properties; confidence intervals | Can be over-optimistic (standard) or pessimistic (0.632+) [9] |

Performance Comparison in Cancer Research Applications

Experimental Evidence from Cancer Studies

Recent research provides compelling experimental data on the performance characteristics of different validation strategies when applied to cancer prediction tasks:

Table 2: Performance Comparison of Validation Methods in Cancer Prediction Studies

| Study Context | Validation Methods Compared | Key Performance Findings | Sample Size | Data Type |
|---|---|---|---|---|
| Head and neck cancer prognosis [9] [4] | Train-test, bootstrap, k-fold CV, nested CV | K-fold and nested CV showed improved stability with larger samples; train-test was unstable; conventional bootstrap was over-optimistic and 0.632+ overly pessimistic | 50-1000 (simulated) | Transcriptomic (15,000 features) + clinical |
| Five-cancer-type classification [13] | 70/30 train-test vs. 5-fold cross-validation | SVM achieved 99.87% accuracy with 5-fold CV vs. 96.3% with train-test split | 801 samples | RNA-seq (20,531 genes) |
| Multiple cancer type classification [5] | 10-fold cross-validation with independent test set | 100% accuracy for BRCA1, KIRC, COAD; 98% for LUAD, PRAD with 10-fold CV | 390 patients | DNA sequencing |
| DCIS upstaging prediction [15] | Multiple train-test splits (40 iterations) | AUC varied considerably: training 0.58-0.70, testing 0.59-0.73 across different splits | 700 cases | Mammography radiomics |
| Breast cancer detection [17] | 10-fold cross-validation with multiple splits | Stacked model achieved 100% accuracy using selected optimal feature subsets | 569 patients | Clinical and genomic features |

The experimental evidence consistently demonstrates that cross-validation strategies generally provide more stable and reliable performance estimates than single train-test splits, particularly for the high-dimensional, limited-sample datasets common in cancer research [13] [9] [15]. For instance, in the simulation study based on a head and neck cancer transcriptomic cohort, k-fold cross-validation demonstrated greater stability than train-test or bootstrap approaches, especially with larger sample sizes [9]. Similarly, in the five-cancer-type RNA-seq study, models evaluated with 5-fold cross-validation reached about 3.6 percentage points higher accuracy (99.87% vs. 96.3%) than with a simple 70/30 train-test split [13], although a higher estimate alone does not establish that it is the more accurate one.

Impact of Dataset Characteristics on Validation Performance

The optimal choice of validation strategy depends heavily on dataset characteristics, particularly sample size and dimensionality:

Sample Size Considerations: With smaller sample sizes (n<100), k-fold cross-validation and nested cross-validation generally outperform alternatives, though performance estimates remain variable [9]. As sample size increases to n=500-1000, these methods demonstrate significantly improved stability [9] [15]. In a mammography radiomics study, cross-validation required samples of 500+ cases to yield representative performance estimates [15].
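The sample-size effect can be illustrated by simulation: draw many cohorts from one fixed data-generating model and measure how much the 5-fold cross-validated AUC varies from cohort to cohort. The logistic generating model, its coefficients (`BETA`), the two sample sizes, and the 20 replicates are illustrative assumptions, not the design of [9] or [15].

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score

# Fixed, hypothetical data-generating model: 3 informative and 7 noise predictors
BETA = np.array([1.0, -0.8, 0.6, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0])


def draw_cohort(n, rng):
    """Draw one synthetic cohort from the fixed logistic model."""
    X = rng.standard_normal((n, BETA.size))
    y = rng.binomial(1, 1.0 / (1.0 + np.exp(-(X @ BETA))))
    return X, y


def spread_of_cv_auc(n, n_reps=20, seed=0):
    """SD across replicate cohorts of the mean 5-fold CV AUC."""
    rng = np.random.default_rng(seed)
    estimates = []
    for rep in range(n_reps):
        X, y = draw_cohort(n, rng)
        cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=rep)
        aucs = cross_val_score(LogisticRegression(max_iter=1000), X, y, cv=cv, scoring="roc_auc")
        estimates.append(aucs.mean())
    return float(np.std(estimates, ddof=1))


spread_small = spread_of_cv_auc(60)
spread_large = spread_of_cv_auc(600)
print(f"SD of CV AUC: n=60 -> {spread_small:.3f}, n=600 -> {spread_large:.3f}")
```

The smaller cohorts are expected to show a noticeably wider spread, which is the practical meaning of "performance estimates remain variable" at small n.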

High-Dimensional Data Challenges: Cancer research frequently involves high-dimensional data where the number of features (genes, radiomic features) vastly exceeds the number of samples [13] [9]. In such settings, k-fold and nested cross-validation are recommended as they provide more reliable performance estimates for Cox penalized models [9]. For example, in a study using RNA-seq data with 20,531 genes from 801 samples, 5-fold cross-validation provided stable performance estimates for identifying significant cancer genes [13].

Class Imbalance Issues: Many cancer outcomes exhibit natural imbalance (e.g., rare cancer types, low event rates) [14]. In these scenarios, stratified cross-validation approaches that preserve class distribution across folds are essential to avoid biased performance estimates [16] [14].

Implementation Guidelines for Cancer Research

Methodological Protocols

Based on experimental evidence from recent cancer studies, below are detailed methodological protocols for implementing the most effective validation strategies:

Protocol for K-Fold Cross-Validation in Cancer Transcriptomics [13] [9]:

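- Data Preparation: Normalize gene expression data (e.g., RNA-seq counts) using an appropriate method, and check for missing values and outliers. Any step that learns from the data, such as z-score standardization or imputation, should be fitted on the training folds only and then applied to the held-out fold, so that no information from the validation data leaks into model development.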

- Fold Creation: Partition data into k=5 or k=10 folds using stratified sampling based on cancer type or outcome to maintain class distribution.

- Iterative Training/Validation: For each fold iteration:

  - Use k-1 folds for feature selection and model training

  - Validate on the held-out fold

  - Record performance metrics (accuracy, AUC, etc.)

- Performance Aggregation: Calculate mean and standard deviation of performance metrics across all folds.

- Final Model Training: Train the final model on the entire dataset using the optimal hyperparameters identified during cross-validation.
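The requirement that feature selection happen inside each training fold can be demonstrated on pure noise. In the sketch below (synthetic data; the 5,000 features, `k=20`, and the logistic model are assumptions), a scikit-learn pipeline refits scaling and `SelectKBest` within every fold, so its AUC should hover near chance. Selecting features once on all samples before cross-validation yields a strongly inflated AUC even though the labels carry no signal.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
X = rng.standard_normal((80, 5000))  # pure-noise "expression" matrix (assumption)
y = np.array([0, 1] * 40)            # labels unrelated to X
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# Correct: scaling and feature selection are refit inside every training fold
pipe = make_pipeline(StandardScaler(), SelectKBest(f_classif, k=20),
                     LogisticRegression(max_iter=1000))
proper = cross_val_score(pipe, X, y, cv=cv, scoring="roc_auc")

# Leaky: the 20 features are chosen once using all samples, including validation folds
X_leaky = SelectKBest(f_classif, k=20).fit_transform(X, y)
leaky = cross_val_score(
    make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000)),
    X_leaky, y, cv=cv, scoring="roc_auc",
)

print(f"proper AUC {proper.mean():.2f} ± {proper.std(ddof=1):.2f}")
print(f"leaky AUC  {leaky.mean():.2f} ± {leaky.std(ddof=1):.2f}")

# Final model training: refit the whole pipeline on all available data
final_model = pipe.fit(X, y)
```

Wrapping every data-dependent step in the pipeline is what makes the reported mean and standard deviation an honest estimate.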

Protocol for Nested Cross-Validation with High-Dimensional Data [9] [4]:

- Outer Loop Setup: Divide data into k outer folds (typically k=5).

- Inner Loop Configuration: For each outer fold, implement an inner cross-validation (e.g., 5-fold) on the training portion.

- Hyperparameter Optimization: Use the inner loop to tune model hyperparameters via grid search or random search.

- Model Evaluation: Refit the model with the hyperparameters selected in the inner loop on the entire training portion of the outer split, then evaluate it on the held-out outer test fold.

- Performance Estimation: Aggregate performance across all outer test folds to obtain a nearly unbiased estimate.
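In scikit-learn, the nested protocol can be expressed compactly by passing a `GridSearchCV` object (the inner loop) to `cross_validate` (the outer loop). `GridSearchCV` refits the best configuration on each outer-training portion before the outer fold is scored. The L2-penalized logistic regression, the grid over `C`, and the synthetic data are assumptions standing in for the Cox penalized models of [9].

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_validate
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic high-dimensional stand-in cohort (assumption)
X, y = make_classification(n_samples=150, n_features=300, n_informative=10, random_state=1)

inner_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=1)  # tuning
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=2)  # estimation

# Scaling lives inside the pipeline so it is refit on every training portion
pipe = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
param_grid = {"logisticregression__C": [0.001, 0.01, 0.1, 1.0]}
inner_search = GridSearchCV(pipe, param_grid, cv=inner_cv, scoring="roc_auc")

result = cross_validate(inner_search, X, y, cv=outer_cv, scoring="roc_auc",
                        return_estimator=True)
outer_aucs = result["test_score"]
chosen_C = [est.best_params_["logisticregression__C"] for est in result["estimator"]]
print(f"nested CV AUC: {outer_aucs.mean():.3f} ± {outer_aucs.std(ddof=1):.3f}")
print("C selected in each outer fold:", chosen_C)
```

Different outer folds may select different values of `C`; that variability is part of what the nested estimate accounts for.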

Protocol for Train-Test Validation with Multiple Splits [15]:

- Multiple Iterations: Implement 40-50 random shuffles and splits of the data into training and test sets.

- Balanced Splitting: Maintain consistent outcome rates across training and test splits.

- Performance Distribution Analysis: Record performance metrics for each split and analyze the distribution.

- Stability Assessment: Evaluate the range and variance of performance across splits.
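The repeated-split protocol maps onto scikit-learn's `StratifiedShuffleSplit`, which keeps the outcome rate similar in the training and test sets of every shuffle. The 40 splits mirror the iteration count described above; the synthetic imbalanced dataset, the 30% test fraction, and the logistic model are assumptions.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import StratifiedShuffleSplit

# Synthetic, imbalanced stand-in cohort (assumption): about 20% events
X, y = make_classification(n_samples=300, n_features=50, n_informative=5,
                           weights=[0.8, 0.2], random_state=0)

splitter = StratifiedShuffleSplit(n_splits=40, test_size=0.3, random_state=0)
train_aucs, test_aucs = [], []
for train_idx, test_idx in splitter.split(X, y):
    model = LogisticRegression(max_iter=1000).fit(X[train_idx], y[train_idx])
    train_aucs.append(roc_auc_score(y[train_idx], model.predict_proba(X[train_idx])[:, 1]))
    test_aucs.append(roc_auc_score(y[test_idx], model.predict_proba(X[test_idx])[:, 1]))

# Performance distribution and stability across the 40 splits
test_aucs = np.asarray(test_aucs)
print(f"train AUC range {min(train_aucs):.3f}-{max(train_aucs):.3f}")
print(f"test AUC mean {test_aucs.mean():.3f}, SD {test_aucs.std(ddof=1):.3f}, "
      f"range {test_aucs.min():.3f}-{test_aucs.max():.3f}")
```

Reporting the full range alongside the mean makes clear how much a single arbitrary split could have misled.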

Workflow Visualization

The following diagram illustrates the logical relationship and workflow between different internal validation strategies, highlighting their interconnectedness and appropriate application contexts in cancer research:

This decision framework provides a systematic approach for cancer researchers to select appropriate validation strategies based on their specific dataset characteristics and modeling objectives.

Essential Research Reagent Solutions

The successful implementation of internal validation strategies in cancer prediction research requires specific computational tools and resources. The table below details essential "research reagent solutions" for conducting robust internal validation:

Table 3: Essential Research Reagents for Internal Validation in Cancer Prediction Studies

| Reagent Category | Specific Tools/Libraries | Function in Validation Pipeline | Example Applications in Cancer Research |
|---|---|---|---|
| Programming Environments | Python (scikit-learn, pandas, numpy) [13]; R [9] | Data preprocessing, model implementation, validation execution | RNA-seq analysis [13]; transcriptomic simulation [9] |
| Validation Implementations | scikit-learn cross_val_score, StratifiedKFold [13] [16]; custom nested CV scripts [9] | Automated k-fold, stratified CV, nested CV execution | Breast cancer classification [13] [16]; head and neck cancer prognosis [9] |
| High-Performance Computing | Cloud computing platforms; parallel processing frameworks | Handling computational demands of repeated model fitting | Large-scale transcriptomic analysis [9]; radiomic feature processing [15] |
| Specialized Cancer Datasets | TCGA RNA-seq data [13]; CuMiDa brain cancer expression [13]; MIMIC-III [14] | Benchmark datasets for method development and comparison | Pan-cancer classification [13]; mortality prediction [14] |
| Model Interpretation Tools | SHAP [5]; LIME [17] | Post-validation model explanation and feature importance | DNA sequence classification [5]; breast cancer detection [17] |

These research reagents form the foundation for implementing robust internal validation protocols in cancer prediction studies. The selection of appropriate tools should align with the specific data modalities (genomic, clinical, imaging) and computational requirements of the research project.
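One practical consequence of using these tools is that preprocessing must be refit inside every training fold. The sketch below wraps scaling and an L1-penalized logistic regression in a pipeline and scores it with `cross_val_score` over a `StratifiedKFold` splitter. It assumes scikit-learn and synthetic data. The sample size, feature count, 80:20 class weights, and `C=0.5` are illustrative choices, not values taken from the cited studies.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic, imbalanced, high-dimensional stand-in (assumption: 150 samples, 500 features)
X, y = make_classification(n_samples=150, n_features=500, n_informative=15,
                           weights=[0.8, 0.2], random_state=1)

# Scaling and L1-based feature selection are fit on each training fold only,
# so statistics from the held-out fold never leak into preprocessing.
pipeline = make_pipeline(
    StandardScaler(),
    LogisticRegression(penalty="l1", solver="liblinear", C=0.5),
)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=1)
scores = cross_val_score(pipeline, X, y, cv=cv, scoring="roc_auc")
print(f"AUC per fold: {np.round(scores, 3)}; mean = {scores.mean():.3f}")
```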

Internal validation represents a critical methodological step in developing cancer prediction models that generalize to new patient populations. The experimental evidence and comparative analysis presented in this guide demonstrate that k-fold cross-validation and nested cross-validation generally provide more stable and reliable performance estimates compared to simple train-test splits or bootstrap methods, particularly for the high-dimensional, limited-sample datasets common in cancer research [13] [9].

The choice of optimal validation strategy depends on specific dataset characteristics, including sample size, dimensionality, class balance, and computational resources. For large sample sizes (n>1000), train-test splits may suffice for initial model screening, while small to moderate-sized datasets benefit substantially from k-fold cross-validation [9] [15]. In high-dimensional settings requiring extensive hyperparameter tuning, nested cross-validation provides the most unbiased performance estimates despite increased computational demands [9] [4].
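In scikit-learn, nested cross-validation can be written by placing a `GridSearchCV` object (the inner tuning loop) inside `cross_val_score` (the outer evaluation loop). The following is a minimal sketch on synthetic data. The SVM model, the parameter grid, and the fold counts are assumptions chosen for illustration.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

X, y = make_classification(n_samples=200, n_features=100, n_informative=8, random_state=2)

# Inner loop: hyperparameter search, run only on each outer training set
inner_cv = StratifiedKFold(n_splits=3, shuffle=True, random_state=2)
tuned_model = GridSearchCV(
    make_pipeline(StandardScaler(), SVC()),
    param_grid={"svc__C": [0.1, 1, 10], "svc__gamma": ["scale", 0.01]},
    cv=inner_cv,
    scoring="roc_auc",
)

# Outer loop: performance is measured on folds never seen by the tuning step
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=3)
nested_scores = cross_val_score(tuned_model, X, y, cv=outer_cv, scoring="roc_auc")
print(f"Nested CV AUC: {nested_scores.mean():.3f} +/- {nested_scores.std():.3f}")

# For contrast: the best inner score after tuning on all data reuses the tuning data
tuned_model.fit(X, y)
print(f"Non-nested (tuning score reused): {tuned_model.best_score_:.3f}")
```

The non-nested figure comes from the same data that selected the hyperparameters, which is why it is often, though not always, higher than the nested estimate.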

As cancer prediction models continue to evolve in complexity and clinical relevance, employing rigorous internal validation strategies will remain essential for producing trustworthy, generalizable results that can potentially inform clinical decision-making and drug development pipelines.

In computational oncology, the reliable prediction of cancer risk, recurrence, and treatment response is paramount. The performance metrics of these predictive models—often celebrated in research publications—are not inherent properties of the algorithms themselves. Instead, they are profoundly influenced by the choice of validation strategy employed during benchmarking. Benchmarking, the process of evaluating model performance against standardized criteria or datasets to compare different models, serves as the foundation for selecting which models advance toward clinical application [18]. Within this process, the validation strategy—the method for assessing how well a model generalizes to unseen data—acts as a critical filter. It directly controls the reliability of performance metrics such as accuracy, AUC, and hazard ratios. For researchers, scientists, and drug development professionals, understanding this interaction is not merely academic; it is essential for making informed decisions about which models are truly robust enough to trust for preclinical and clinical decision-making.

This guide objectively compares how different validation approaches impact the reported performance of cancer prediction models. It synthesizes findings from empirical benchmarking studies and provides structured protocols to help the research community conduct more rigorous, reproducible, and clinically relevant model evaluations.

Core Principles of Model Evaluation and Benchmarking

Before examining the impact of validation, it is crucial to establish a common understanding of key evaluation concepts and the overarching goals of benchmarking.

Key Model Evaluation Metrics

The performance of predictive models is quantified using metrics that vary based on the task (e.g., classification vs. regression) [19] [20]. The table below summarizes common metrics used in cancer prediction research.

Table 1: Common Evaluation Metrics for Predictive Models

| Metric | Description | Use Case in Cancer Research |
|---|---|---|
| Accuracy | Proportion of total correct predictions [20] | Initial screening of classification models (e.g., cancer type) [5] |
| AUC-ROC | Measures the model's ability to separate classes across all thresholds; independent of responder proportion [20] | Overall diagnostic performance (e.g., discriminating cancer vs. normal) [5] |
| Precision | Proportion of positive identifications that were actually correct [19] [20] | When the cost of false alarms is high (e.g., recommending an invasive biopsy) |
| Recall/Sensitivity | Proportion of actual positives correctly identified [19] [20] | Critical for screening where missing a case is unacceptable (e.g., early detection) |
| F1-Score | Harmonic mean of precision and recall [20] | Balanced view when class distribution is imbalanced |
| Concordance Index (C-index) | Measures predictive accuracy for time-to-event data (survival analysis) | Assessing recurrence risk models [21] |
| Hazard Ratio (HR) | Ratio of hazard rates between risk groups in survival analysis | Quantifying the separation between high-risk and low-risk patient groups [21] |
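To make the classification metrics in Table 1 concrete, the sketch below computes them with scikit-learn for ten hypothetical patients. The labels, scores, and the 0.5 decision threshold are invented for illustration. The C-index and hazard ratio require time-to-event data and are not covered here.

```python
from sklearn.metrics import accuracy_score, f1_score, precision_score, recall_score, roc_auc_score

# Hypothetical labels (1 = cancer, 0 = normal) and predicted probabilities for ten patients
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_score = [0.9, 0.8, 0.6, 0.3, 0.7, 0.4, 0.2, 0.2, 0.1, 0.05]
y_pred = [int(s >= 0.5) for s in y_score]   # assumed decision threshold of 0.5

metrics = {
    "accuracy": accuracy_score(y_true, y_pred),
    "precision": precision_score(y_true, y_pred),
    "recall": recall_score(y_true, y_pred),
    "f1": f1_score(y_true, y_pred),
    "auc_roc": roc_auc_score(y_true, y_score),   # threshold-free: uses the raw scores
}
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

At this threshold there are 3 true positives, 1 false negative, 1 false positive, and 5 true negatives. Accuracy is therefore 0.8, and precision, recall, and F1 are each 0.75. The AUC of 0.875 means 21 of the 24 positive-negative pairs are ranked correctly. Unlike the other metrics, the AUC does not depend on the chosen threshold.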

The Purpose and Process of Model Benchmarking

Model benchmarking is a structured process for comparing the performance of different machine learning models against a set of standardized criteria or datasets [18]. Its primary purpose is to provide an objective evaluation to determine which model is best suited for a particular task, ensuring that the chosen model meets necessary performance standards before deployment [18]. In cancer research, this is vital for translating algorithms from academic exercises into tools that can genuinely impact patient care.

A robust benchmarking pipeline typically involves several key steps [18]:

- Selection of Benchmark Datasets: Choosing standard, well-characterized datasets that represent real-world inputs.

- Model Training & Evaluation: Training selected models on these datasets and evaluating them using predefined metrics (Table 1).

- Scalability & Efficiency Testing: Assessing computational performance, which is crucial for clinical applications.

- Comparison & Reporting: Systematically comparing results and documenting findings to provide clear recommendations.

Comparing Validation Strategies and Their Impact on Performance

The choice of validation strategy is one of the most consequential decisions in the benchmarking pipeline. Different methods introduce varying levels of bias and variance in performance estimates.

Common Validation Strategies

Table 2: Comparison of Common Model Validation Strategies

| Validation Method | Description | Advantages | Disadvantages & Impact on Performance |
|---|---|---|---|
| Holdout Validation | Dataset is split once into a single training set and a single test set [19]. | Simple and computationally efficient [19]. | High Variance in Metrics: A single, fortunate split can inflate performance. Performance is highly dependent on which samples end up in the test set, leading to unreliable estimates [14]. |
| K-Fold Cross-Validation | Dataset is split into k subsets (folds). The model is trained on k-1 folds and tested on the remaining fold, repeated k times [19] [5]. | More robust performance estimate; uses data more efficiently [19] [14]. | Can be computationally intensive. Subject-wise vs. Record-wise Splitting: In healthcare data, if records from the same patient are split across training and test sets, it can lead to over-optimistic performance due to data leakage [14]. |
| Stratified K-Fold Cross-Validation | A variant of K-Fold that preserves the percentage of samples for each class in every fold [14]. | Essential for imbalanced datasets; provides more reliable estimates for minority classes. | Similar computational cost to standard K-Fold. Mitigates bias in performance metrics that can occur if a random fold contains very few examples of a rare cancer type. |
| Nested Cross-Validation | Features an outer loop for performance estimation and an inner loop for hyperparameter tuning, preventing information leakage between tuning and evaluation [14]. | Considered the gold standard for unbiased performance estimation; reduces optimistic bias [14]. | High Computational Cost. Provides a realistic estimate of how the model will perform on unseen data, often resulting in lower but more trustworthy metrics compared to a single holdout set. |
| External Validation | A model developed on one dataset is tested on a completely independent dataset from a different source or institution [21]. | The strongest test of generalizability; simulates real-world deployment. | Often reveals a significant drop in performance ("performance decay") compared to internal validation, highlighting overfitting to the development dataset's specifics [21]. |
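The subject-wise versus record-wise distinction in Table 2 can be checked directly. This sketch assumes scikit-learn and a hypothetical dataset of 30 patients with four records each. It counts the test folds in which a patient also has records in the training set, first under plain `KFold` and then under `GroupKFold`, which keeps all of a patient's records in the same fold.

```python
import numpy as np
from sklearn.model_selection import GroupKFold, KFold

# Hypothetical cohort: 30 patients with 4 records (e.g. scans or visits) each
patient_ids = np.repeat(np.arange(30), 4)
X = np.random.default_rng(0).normal(size=(patient_ids.size, 5))

def count_leaky_folds(splitter, groups=None):
    """Count test folds containing a patient who also has records in the training set."""
    leaky = 0
    for train_idx, test_idx in splitter.split(X, groups=groups):
        shared = np.intersect1d(patient_ids[train_idx], patient_ids[test_idx])
        leaky += int(shared.size > 0)
    return leaky

record_wise = count_leaky_folds(KFold(n_splits=5, shuffle=True, random_state=0))
subject_wise = count_leaky_folds(GroupKFold(n_splits=5), groups=patient_ids)
print(f"Leaky folds: record-wise {record_wise}/5, subject-wise {subject_wise}/5")
```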

Empirical Evidence: How Validation Choice Affects Metrics in Cancer Research

The theoretical impact of validation strategies is borne out in real-world cancer modeling studies.

Case Study 1: DNA-Based Cancer Classifier. A study developing a high-accuracy DNA-based classifier for five cancer types reported impressive accuracies of up to 100% for some cancer types [5]. However, a closer look at the methodology reveals that these metrics were derived from a 10-fold cross-validation setup on a single cohort of 390 patients [5]. While more robust than a simple holdout, this approach still represents an internal validation. The performance metrics (100% accuracy, AUC of 0.99) are likely optimistic estimates of how this model would perform on DNA data from a different population or sequencing center. Without external validation, the true generalizability of these stellar metrics remains unknown.

Case Study 2: AI for Lung Cancer Recurrence. In stark contrast, a study on an AI model for predicting recurrence in early-stage lung cancer explicitly included external validation [21]. The model was developed on data from the U.S. National Lung Screening Trial (NLST) and then validated on a completely external cohort from the North Estonia Medical Centre (NEMC). The results clearly demonstrate the validation choice's impact: while the model showed strong performance in internal validation (Hazard Ratio for stage I disease: 1.71), its performance was even more pronounced in the external set (HR: 3.34) [21]. This case shows that a rigorous, external validation strategy can not only validate performance but can also strengthen the evidence for a model's utility, providing much greater confidence in its real-world applicability.

The following workflow diagram illustrates how different validation strategies are integrated into a model benchmarking pipeline and how they influence the final performance assessment.

Figure 1: Workflow of validation strategy impact within a benchmarking pipeline. The choice of validation method directly dictates the generated performance metrics and ultimately determines their reliability.

Experimental Protocols for Rigorous Benchmarking

To ensure fair and informative comparisons, benchmarking studies must follow detailed, rigorous experimental protocols.

Protocol 1: Benchmarking with Internal-External Validation

This protocol, inspired by multi-site data studies, provides a robust framework for assessing generalizability when full external validation is not yet possible [14].

- Data Acquisition and Curation: Collect datasets from multiple independent sources (e.g., different hospitals, clinical trials). In a study on a lung cancer AI model, researchers used data from the U.S. National Lung Screening Trial (NLST), North Estonia Medical Centre (NEMC), and the Stanford NSCLC Radiogenomics database [21]. All data must be consistently curated to align clinical metadata and outcomes.

- Site Rotation for Validation: Designate one site's data as the temporary external test set. Pool the remaining sites' data for model training and development.

- Model Development and Tuning: On the pooled training data, perform model training and hyperparameter tuning using an internal method like nested cross-validation to prevent overfitting [14].

- Internal-External Testing: Apply the fully-trained model from Step 3 to the held-out test set from the single site in Step 2. Record all performance metrics.

- Iteration and Meta-Analysis: Repeat Steps 2-4, rotating the held-out test set through each available data site. Finally, aggregate and meta-analyze the performance metrics across all iterations to get a final estimate of out-of-sample performance.
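When each patient carries a site label, Protocol 1 maps onto scikit-learn's `LeaveOneGroupOut` splitter. The site rotation serves as the outer loop and `GridSearchCV` serves as the inner tuning loop. The sketch is illustrative only. The data and the four site labels are synthetic, so no real between-site shift is simulated. The final step uses a simple sample-size-weighted mean of AUCs in place of a formal meta-analysis.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import GridSearchCV, LeaveOneGroupOut, StratifiedKFold

# Synthetic pooled cohort with a hypothetical site label for each patient
X, y = make_classification(n_samples=600, n_features=30, n_informative=6, random_state=4)
sites = np.random.default_rng(4).choice(["site_A", "site_B", "site_C", "site_D"], size=y.size)

results = []
for train_idx, test_idx in LeaveOneGroupOut().split(X, y, groups=sites):
    held_out_site = sites[test_idx][0]
    # Tune on the pooled remaining sites using internal cross-validation only
    search = GridSearchCV(
        LogisticRegression(max_iter=1000),
        param_grid={"C": [0.01, 0.1, 1.0]},
        cv=StratifiedKFold(n_splits=5, shuffle=True, random_state=0),
        scoring="roc_auc",
    )
    search.fit(X[train_idx], y[train_idx])
    # Evaluate the refit model once on the held-out site
    auc = roc_auc_score(y[test_idx], search.predict_proba(X[test_idx])[:, 1])
    results.append((held_out_site, len(test_idx), auc))

# Simplified aggregation: weight each site's AUC by its number of patients
weights = np.array([n for _, n, _ in results])
site_aucs = np.array([a for _, _, a in results])
pooled_auc = np.average(site_aucs, weights=weights)
for site, n, auc in results:
    print(f"{site}: n={n}, AUC={auc:.3f}")
print(f"Weighted internal-external AUC: {pooled_auc:.3f}")
```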

Protocol 2: Large-Scale Benchmarking Tournament

For comprehensively comparing many models, a "tournament" approach, as used in travel demand modeling, can be adapted for cancer informatics [22]. This is suitable for fields with many competing algorithms, such as radiomic feature analysis or genomic biomarker discovery.

- Define the Tournament Arena: Specify the precise prediction task (e.g., recurrence risk within 24 months), the evaluation metrics (e.g., C-index, AUC), and the benchmark datasets.

- Select Competitors: Include a wide range of models, from traditional statistical methods (e.g., Cox regression, logistic regression [23]) to modern machine learning and deep learning models [22]. Ensure all models are evaluated on the same data splits.

- Run Paired Experiments: For each model and dataset combination, run multiple paired experiments using a consistent validation strategy (e.g., repeated k-fold cross-validation) to account for randomness.

- Statistical Comparison: Use a formal statistical model (e.g., a pairwise comparison model) to analyze the results. The goal is to estimate the intrinsic predictive value of each model while controlling for contextual factors like dataset and sample size [22].

- Report and Rank: Report model rankings based on statistical significance, not just point estimates of performance. A key output is to identify a set of top-performing models whose differences are not statistically significant, acknowledging that the "best" model can depend on context.
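The paired-experiment and statistical-comparison steps can be sketched with a shared `RepeatedStratifiedKFold` splitter and SciPy's Wilcoxon signed-rank test. The two competing models, the synthetic data, and the 5x5 repetition scheme are assumptions made for illustration.

```python
from scipy.stats import wilcoxon
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import RepeatedStratifiedKFold, cross_val_score

X, y = make_classification(n_samples=300, n_features=40, n_informative=8, random_state=5)

# A single splitter with a fixed seed gives every competitor identical splits (paired design)
cv = RepeatedStratifiedKFold(n_splits=5, n_repeats=5, random_state=5)
competitors = {
    "logistic_regression": LogisticRegression(max_iter=1000),
    "random_forest": RandomForestClassifier(n_estimators=200, random_state=5),
}
scores = {name: cross_val_score(model, X, y, cv=cv, scoring="roc_auc")
          for name, model in competitors.items()}

# Paired, non-parametric test on the 25 per-split AUC differences
result = wilcoxon(scores["logistic_regression"], scores["random_forest"])
for name, s in scores.items():
    print(f"{name}: mean AUC {s.mean():.3f} (sd {s.std(ddof=1):.3f})")
print(f"Wilcoxon signed-rank p-value: {result.pvalue:.4f}")
```

Cross-validation training sets overlap, so the per-split scores are not independent. As a result, p-values from a plain Wilcoxon or t-test tend to be overconfident, and corrected resampled tests are often preferred for formal claims.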

The Scientist's Toolkit: Essential Reagents for Benchmarking Studies

Table 3: Essential Research Reagent Solutions for Computational Benchmarking

| Tool / Reagent | Function / Purpose | Example Use in Cancer Model Benchmarking |
|---|---|---|
| Standardized Benchmark Datasets | Provides a common ground for fair model comparison. | Publicly available datasets like The Cancer Genome Atlas (TCGA) or MIMIC-III (for critical care) [14] allow different models to be tested on identical data. |
| Stratified K-Fold Cross-Validator | Software function to split data into folds while preserving class distribution. | Prevents optimistic bias from random splits in imbalanced tasks (e.g., predicting a rare cancer subtype) by ensuring all folds have representative examples [14]. |
| Nested Cross-Validation Pipeline | A software script that automates the outer and inner loops of model training and tuning. | Crucial for obtaining unbiased performance estimates when comparing multiple models that require hyperparameter optimization [14]. |
| Radiomics/Feature Extraction Library | Standardized software to quantify medical images into mineable data. | Enables fair comparison of different AI models on the same set of extracted image features (e.g., for predicting lung cancer recurrence from CT scans) [21]. |
| Statistical Comparison Scripts | Code for formal statistical testing of model performance differences (e.g., t-tests, Wilcoxon signed-rank tests). | Moves beyond deterministic claims of "model A beat model B" to statistically sound conclusions about performance superiority in a benchmarking tournament [22]. |

The path to clinically viable cancer prediction models is paved with rigorous benchmarking. As this guide has demonstrated, the reported performance of any model is inextricably linked to the validation strategy used to assess it. A model reporting 100% accuracy under internal cross-validation on a single cohort [5] has not yet shown that this figure holds on independent data, and internal estimates of this kind often decline upon external validation. By contrast, a model whose performance holds up under external or internal-external validation, as in the lung cancer recurrence study [21], provides a more trustworthy foundation for further development.

Therefore, the choice of validation is not a mere technicality; it is a fundamental aspect of scientific rigor in computational oncology. By adopting the more demanding practices of nested and external validation, and by embracing comprehensive benchmarking tournaments, the research community can generate more reliable evidence. This will accelerate the translation of truly robust models into tools that can ultimately improve drug discovery and patient outcomes.

In the pursuit of reliable cancer prediction models, researchers consistently face three formidable data challenges: small sample sizes, imbalanced classes, and censored survival data. These issues are not merely statistical nuisances but fundamental obstacles that can skew model performance, generate overly optimistic results, and ultimately limit clinical applicability. Within the broader thesis of cross-validation strategies for cancer prediction research, addressing these data challenges becomes paramount, as the choice of validation methodology is deeply intertwined with data quality and structure. The integration of sophisticated preprocessing techniques with appropriate validation frameworks forms the foundation upon which trustworthy predictive models are built, enabling more accurate stratification of cancer risk, recurrence, and patient survival.

This guide objectively compares contemporary methodologies designed to overcome these data limitations, presenting experimental data and protocols from recent research to inform selection criteria for researchers, scientists, and drug development professionals. By comparing the performance of various techniques on real-world cancer datasets, this analysis provides evidence-based guidance for advancing model robustness in oncological research.

Comparative Analysis of Solutions for Small Sample Sizes

Small sample sizes, particularly prevalent in genomic and rare cancer studies, increase the risk of model overfitting and reduce generalizability. Internal validation strategies become critically important in these high-dimensional, low-sample-size settings.

Internal Validation Strategies for Small Samples

A simulation study based on head and neck tumor transcriptomic data (N=76 patients) provides direct performance comparisons of various internal validation methods in high-dimensional settings. The study evaluated clinical variables and transcriptomic data with disease-free survival endpoints, testing methods across simulated sample sizes from 50 to 1000 patients [4].

Table 1: Performance Comparison of Internal Validation Methods for Small Sample Sizes

| Validation Method | Recommended Sample Size | Stability | Risk of Optimism | Discriminative Performance |
|---|---|---|---|---|
| Train-Test Split | Not recommended for n<500 | Unstable | High | Highly variable |
| Conventional Bootstrap | n=100-500 | Moderate | Overly optimistic | Inflated |
| 0.632+ Bootstrap | n>500 | Moderate | Overly pessimistic | Deflated |
| k-Fold Cross-Validation | n≥50 | High | Well-controlled | Reliable |
| Nested Cross-Validation | n≥75 | High | Well-controlled | Reliable |
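For reference, the conventional (Harrell-style) optimism-corrected bootstrap can be sketched as below. The sketch assumes scikit-learn and a synthetic 80-patient, 50-feature dataset. It is not the 0.632+ estimator, which instead combines apparent and out-of-bootstrap error with weights that depend on a no-information error rate. The 200 bootstrap replicates are an arbitrary choice.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score

X, y = make_classification(n_samples=80, n_features=50, n_informative=5, random_state=6)
rng = np.random.default_rng(6)

def fit(X_fit, y_fit):
    return LogisticRegression(max_iter=1000).fit(X_fit, y_fit)

def auc(model, X_eval, y_eval):
    return roc_auc_score(y_eval, model.predict_proba(X_eval)[:, 1])

# Apparent performance: the model is scored on the data it was trained on
apparent = auc(fit(X, y), X, y)

optimism = []
for _ in range(200):
    idx = rng.integers(0, len(y), size=len(y))      # bootstrap sample, drawn with replacement
    if np.unique(y[idx]).size < 2:                   # skip resamples with a single class
        continue
    boot_model = fit(X[idx], y[idx])
    # Optimism = score on the bootstrap sample minus score of the same model on the original data
    optimism.append(auc(boot_model, X[idx], y[idx]) - auc(boot_model, X, y))

corrected = apparent - np.mean(optimism)
print(f"Apparent AUC: {apparent:.3f}; optimism-corrected AUC: {corrected:.3f}")
```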

Experimental Protocol: k-Fold Cross-Validation for High-Dimensional Data

Research classifying five cancer types (BRCA1, KIRC, COAD, LUAD, PRAD) from DNA sequences of 390 patients demonstrates an effective protocol for small sample sizes using k-fold cross-validation [5]:

- Dataset Partitioning: The entire cohort was divided into training (194 patients), validation (98 patients), and test (98 patients) sets

- Cross-Validation Setup: Implemented 10-fold cross-validation, where the dataset was partitioned into 10 distinct subsets

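- Iterative Training: For each iteration, nine subsets were used for training and the remaining subset was reserved for validation. With 10 folds over 390 patients, each held-out fold contains roughly 39 patients and each training set roughly 351. The 194- and 98-patient figures therefore correspond to the fixed partition in the first step rather than to individual folds.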

- Model Aggregation: Predictions from all 10 validation sets were combined to generate final performance metrics

- Hyperparameter Tuning: Grid search was performed within each fold to optimize parameters without data leakage

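This approach achieved reported accuracies of 100% for BRCA1, KIRC, and COAD, and 98% for LUAD and PRAD [5]. These results show that careful cross-validation makes efficient use of limited samples. However, the estimates come from internal validation on a single cohort, so they may still overstate performance on independent data.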
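The aggregation and tuning steps of this protocol can be sketched with `cross_val_predict`, which returns one out-of-fold prediction per patient. Wrapping the model in a `GridSearchCV` keeps tuning inside each training fold. The data are a synthetic five-class stand-in for the 390-patient cohort, and the SVM model and grid are assumptions, not the configuration used in [5].

```python
from sklearn.datasets import make_classification
from sklearn.metrics import accuracy_score, confusion_matrix
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_predict
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic 5-class stand-in for a 390-patient cohort (class labels 0-4 are placeholders)
X, y = make_classification(n_samples=390, n_features=100, n_informative=20,
                           n_classes=5, n_clusters_per_class=1, random_state=7)

# Grid search runs inside each outer training fold, so tuning never sees the held-out fold
tuned = GridSearchCV(make_pipeline(StandardScaler(), SVC()),
                     param_grid={"svc__C": [0.1, 1, 10]},
                     cv=3)
outer = StratifiedKFold(n_splits=10, shuffle=True, random_state=7)

# Each patient receives exactly one out-of-fold prediction; metrics use the pooled set
oof_pred = cross_val_predict(tuned, X, y, cv=outer)
print(f"Pooled out-of-fold accuracy: {accuracy_score(y, oof_pred):.3f}")
print(confusion_matrix(y, oof_pred))
```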

Comparative Analysis of Solutions for Imbalanced Classes

Class imbalance presents a significant challenge in cancer prediction, where minority classes (e.g., malignant cases, rare cancer subtypes) are often the most clinically important. Multiple resampling strategies have been developed to address this issue with varying effectiveness.

Performance Comparison of Imbalance Handling Techniques

Research on colorectal cancer survival prediction using SEER data provides direct comparison of hybrid sampling methods on highly imbalanced datasets (1-year survival imbalance ratio 1:10) [24]. The study evaluated tree-based classifiers with various sampling approaches for 1-, 3-, and 5-year survival prediction.

Table 2: Performance Comparison of Sampling Methods for Imbalanced Colorectal Cancer Data

| Sampling Method | Classifier | 1-Year Sensitivity | 3-Year Sensitivity | 5-Year Sensitivity | Variance Reduction |
|---|---|---|---|---|---|
| None (Baseline) | LGBM | 58.20% | 72.45% | 60.15% | Baseline |
| SMOTE | LGBM | 68.50% | 78.30% | 61.80% | 45.2% |
| RENN | LGBM | 70.10% | 79.95% | 62.40% | 63.7% |
| SMOTE + RENN | LGBM | 72.30% | 80.81% | 63.03% | 88.8% |
| RE-SMOTEBoost | AdaBoost | 75.50%* | 82.30%* | 65.20%* | 88.8% |

Note: *Estimated performance based on reported improvements in original study [25]
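A key implementation detail for SMOTE + RENN is that resampling must be applied only to the training portion of each fold. If oversampling happens before splitting, synthetic points interpolated from test patients leak into training. The sketch below uses minimal hand-written versions of SMOTE and repeated edited nearest neighbours, simplified relative to library implementations such as imbalanced-learn's. It also uses synthetic data at roughly a 1:10 ratio and a random forest in place of LightGBM, so its numbers are not comparable to Table 2.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import recall_score
from sklearn.model_selection import StratifiedKFold
from sklearn.neighbors import NearestNeighbors

rng = np.random.default_rng(8)

def smote(X_min, n_new, k=5):
    """Minimal SMOTE: interpolate between minority samples and random minority neighbours."""
    _, neigh = NearestNeighbors(n_neighbors=k + 1).fit(X_min).kneighbors(X_min)
    base = rng.integers(0, len(X_min), size=n_new)                # anchor sample for each new point
    partner = neigh[base, rng.integers(1, k + 1, size=n_new)]     # column 0 is the sample itself
    gap = rng.random((n_new, 1))                                  # interpolation factor in [0, 1)
    return X_min[base] + gap * (X_min[partner] - X_min[base])

def renn(X, y, majority_label, k=3, max_iter=10):
    """Minimal repeated ENN: drop majority samples whose k neighbours mostly disagree, until stable."""
    for _ in range(max_iter):
        _, neigh = NearestNeighbors(n_neighbors=k + 1).fit(X).kneighbors(X)
        disagree = (y[neigh[:, 1:]] != y[:, None]).sum(axis=1) > k / 2
        drop = disagree & (y == majority_label)
        if not drop.any():
            break
        X, y = X[~drop], y[~drop]
    return X, y

# Synthetic stand-in with roughly a 1:10 minority-to-majority ratio
X, y = make_classification(n_samples=1100, n_features=10, weights=[0.91, 0.09], random_state=8)

sensitivities = []
for train_idx, test_idx in StratifiedKFold(n_splits=5, shuffle=True, random_state=8).split(X, y):
    X_tr, y_tr = X[train_idx], y[train_idx]
    # Resample the training fold only; the test fold keeps its natural imbalance
    X_min = X_tr[y_tr == 1]
    n_new = int((y_tr == 0).sum() - len(X_min))
    X_res = np.vstack([X_tr, smote(X_min, n_new)])
    y_res = np.concatenate([y_tr, np.ones(n_new, dtype=y_tr.dtype)])
    X_res, y_res = renn(X_res, y_res, majority_label=0)
    clf = RandomForestClassifier(n_estimators=200, random_state=8).fit(X_res, y_res)
    sensitivities.append(recall_score(y[test_idx], clf.predict(X[test_idx])))

print(f"Sensitivity per fold: {np.round(sensitivities, 3)}")
```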

Advanced Protocol: RE-SMOTEBoost for Imbalance and Overlap

The novel RE-SMOTEBoost method addresses both class imbalance and overlapping classes through a double-pruning approach [25]:

- Entropy-Based Pruning: Applies information entropy to identify and remove low-information majority class samples across the entire distribution, not just overlapping regions

- Roulette Wheel Selection: Uses Mahalanobis distance and roulette wheel selection to prioritize minority class instances with high information content for synthetic generation

- Boundary-Focused Generation: Generates synthetic samples near decision boundaries using SMOTE, guided by double regularization to prevent new overlapping samples

- Entropy Filtering: Implements a post-generation filter to remove low-quality synthetic samples while retaining informative ones

- Adaptive Boosting Integration: Incorporates the double pruning procedure into AdaBoost, leveraging adaptive reweighting to emphasize hard-to-classify samples

This approach demonstrated a 3.22% improvement in accuracy and 88.8% reduction in variance compared to the best-performing sampling methods [25].

Comparative Analysis of Solutions for Censored Data

Censoring presents unique challenges in cancer survival analysis, where the event of interest (recurrence, death) may not be observed for all patients during the study period. Different statistical approaches address fundamentally different clinical questions.

Analysis Methods for Censored Endpoints

Research on invasive breast cancer-free survival (IBCFS) highlights how different handling methods for second primary non-breast cancers (SPNBCs) – which are excluded from the IBCFS endpoint – address distinct clinical questions and yield different interpretations [26].

Table 3: Comparison of Statistical Approaches for Censored Cancer Endpoints

| Analytical Approach | Clinical Question Addressed | SPNBC Handling | Interpretation | Recommended Use |
|---|---|---|---|---|
| Ignore SPNBCs | Total treatment effect on IBCFS | Follow-up continues after an SPNBC; later IBCFS events are counted | Estimates overall treatment effect | Primary analysis for most trials |
| Censor SPNBCs | Hypothetical IBCFS risk had no SPNBCs occurred | Patients are censored at SPNBC occurrence | Estimates effect under hypothetical condition | Sensitivity analysis |
| Competing Risks | IBCFS risk while free from any SPNBC | Treated as competing events | Estimates cause-specific effect | When SPNBC risk is high |
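The difference between the "Censor SPNBCs" and "Competing Risks" rows can be shown numerically. The sketch below uses NumPy and ten hypothetical patients, where status 1 is an IBCFS event, 2 is an SPNBC treated as a competing event, and 0 is censored. It compares 1 minus the Kaplan-Meier estimate, with competing events censored, against the Aalen-Johansen cumulative incidence. Both are evaluated at the end of follow-up.

```python
import numpy as np

def one_minus_km(time, status, cause=1):
    """1 - Kaplan-Meier for `cause`, treating all other outcomes (including competing events) as censored."""
    surv = 1.0
    for t in np.unique(time[status == cause]):
        at_risk = np.sum(time >= t)
        events = np.sum((time == t) & (status == cause))
        surv *= 1 - events / at_risk
    return 1 - surv

def cumulative_incidence(time, status, cause=1):
    """Aalen-Johansen cumulative incidence of `cause`, with other nonzero statuses as competing events."""
    event_free, cif = 1.0, 0.0
    for t in np.unique(time[status > 0]):
        at_risk = np.sum(time >= t)
        cif += event_free * np.sum((time == t) & (status == cause)) / at_risk
        event_free *= 1 - np.sum((time == t) & (status > 0)) / at_risk
    return cif

# Hypothetical follow-up (years); status 1 = IBCFS event, 2 = SPNBC as competing event, 0 = censored
time = np.array([2, 3, 3, 5, 6, 7, 8, 9, 10, 12])
status = np.array([1, 2, 0, 1, 2, 1, 0, 2, 1, 0])

print(f"1 - KM (SPNBCs censored):   {one_minus_km(time, status):.3f}")          # 0.691
print(f"Cumulative incidence (CR):  {cumulative_incidence(time, status):.3f}")  # 0.481
```

Here 1 - KM estimates the hypothetical risk had SPNBCs been absent. That value exceeds the actual cumulative incidence whenever competing events are common. The "Ignore SPNBCs" strategy cannot be shown with these data because it requires follow-up collected after each SPNBC.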

External Validation Protocol for Survival Models

A machine learning model for early-stage lung cancer recurrence risk stratification demonstrates rigorous validation methodology for censored data [21]:

- Multi-Cohort Design: Incorporated data from 1,267 patients across U.S. National Lung Screening Trial (NLST), North Estonia Medical Centre (NEMC), and Stanford NSCLC Radiogenomics databases

- Development and External Cohorts: Used 1,015 patients for algorithm development, with 725 for internal validation, and 252 patients from NEMC as the external validation cohort

- Preoperative Focus: Trained survival model using preoperative CT radiomic features and clinical variables to predict recurrence likelihood

- Stratified Performance: Evaluated model using concordance index and disease-free survival across full cohort and within stage I patients specifically

- Pathologic Correlation: Assessed relationship between ML-derived risk scores and established pathologic risk factors using t-tests

The model demonstrated superior performance compared to conventional TNM staging, with hazard ratios of 3.34 versus 1.98 for stratifying stage I patients in external validation [21].
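The concordance index used in this protocol can be computed from its definition. This is a minimal sketch of Harrell's C for right-censored data, using invented example data. It follows one common tie convention: pairs with tied follow-up times are skipped, and tied risk scores count as half. Library implementations may handle ties differently.

```python
import numpy as np

def harrell_c_index(time, event, risk):
    """Harrell's C: fraction of comparable pairs whose risk ordering matches their event ordering."""
    time, event, risk = (np.asarray(a) for a in (time, event, risk))
    concordant, comparable = 0.0, 0
    for i in range(len(time)):
        if not event[i]:
            continue                          # a censored patient cannot anchor a pair
        for j in range(len(time)):
            if time[j] > time[i]:             # patient j was still event-free when i had the event
                comparable += 1
                if risk[i] > risk[j]:
                    concordant += 1.0         # higher risk, earlier event: concordant
                elif risk[i] == risk[j]:
                    concordant += 0.5         # tied risk scores count as half
    return concordant / comparable

# Hypothetical follow-up (months), event indicator (1 = recurrence), and model risk scores
time = [5, 8, 12, 3, 10]
event = [1, 0, 1, 1, 0]
risk = [0.7, 0.4, 0.2, 0.9, 0.8]
print(round(harrell_c_index(time, event, risk), 3))   # 0.857
```

In this example 7 pairs are comparable and 6 are ordered correctly, giving C = 6/7 ≈ 0.857.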

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 4: Key Research Reagent Solutions for Addressing Cancer Data Challenges

| Reagent/Solution | Primary Function | Application Context | Key Benefit |
|---|---|---|---|
| k-Fold Cross-Validation | Robust performance estimation with limited data | Small sample sizes, high-dimensional data | Minimizes overfitting, maximizes data utility |
| Nested Cross-Validation | Unbiased hyperparameter tuning and validation | Model selection with small samples | Prevents optimistic performance estimates |
| SMOTE + RENN Pipeline | Hybrid resampling for class imbalance | Medical datasets with rare outcomes | Improves sensitivity, reduces variance |
| RE-SMOTEBoost | Advanced ensemble resampling | Combined imbalance and class overlap | Double pruning enhances boundary capture |
| Structural Similarity Score (SSS) | Synthetic data quality assessment | AI-generated synthetic datasets | Validates fidelity to original data distribution |
| Competing Risks Analysis | Accurate time-to-event estimation | Survival data with multiple event types | Prevents biased cause-specific risk estimates |

Based on comparative performance data, researchers can strategically select methodologies based on their specific data challenges:

For small sample sizes (n<100), k-fold cross-validation and nested cross-validation provide the most stable performance, with k-fold being computationally more efficient for initial experiments. When sample sizes exceed 500, the 0.632+ bootstrap method becomes increasingly viable.

For imbalanced classes, the hybrid SMOTE+RENN approach with LightGBM classifiers delivered the highest measured sensitivity among the compared sampling methods in highly imbalanced scenarios (imbalance ratios of about 1:10 or more extreme). RE-SMOTEBoost may offer additional benefits when class overlap is suspected.

For censored data, the ignore approach for excluded components (like SPNBCs in IBCFS) is recommended for estimating total treatment effects in most clinical trials, with competing risks analysis reserved for high-risk scenarios.

The most robust cancer prediction models will integrate multiple strategies—perhaps combining synthetic data generation for class imbalance with nested cross-validation for small samples—tailored to their specific data limitations and clinical questions. This comparative analysis demonstrates that methodological choices in addressing data challenges significantly impact model performance, reinforcing their critical role within a comprehensive cross-validation strategy for cancer prediction research.

Implementing Cross-Validation for Genomic and Clinical Data

In the field of oncology research, the development of robust and generalizable machine learning models is paramount for accurate cancer prediction and diagnosis. Cross-validation (CV) stands as a critical methodology for reliably estimating model performance, particularly when working with high-dimensional biological data such as genomics, transcriptomics, and histopathological imaging. The core principle of cross-validation involves partitioning a dataset into complementary subsets, performing model training on one subset (training set), and validating the model on the other subset (validation or test set). This process mitigates the risk of overfitting and provides a more realistic assessment of how the model will perform on unseen data. In cancer research, where datasets are often characterized by limited sample sizes alongside a vast number of features (e.g., gene expression data from RNA sequencing), rigorous validation is indispensable for developing trustworthy predictive models [13] [9].

Two predominant cross-validation approaches are K-Fold Cross-Validation and its enhanced variant, Stratified K-Fold Cross-Validation. The fundamental distinction between them lies in how the data is partitioned. Standard KFold divides the data into k consecutive folds after potentially shuffling the data, whereas StratifiedKFold ensures that each fold preserves the percentage of samples for each target class [27] [28]. This preservation of class balance is especially crucial in medical datasets, which frequently exhibit inherent class imbalances, such as a higher proportion of healthy control samples compared to cancer-positive cases. The choice between these two validation strategies can significantly impact performance estimates and, consequently, the perceived success of a cancer prediction model [29] [30].

Theoretical Foundations and Key Differences

K-Fold Cross-Validation

K-Fold Cross-Validation is a foundational resampling technique used to evaluate machine learning models. The procedure is systematic:

- The entire dataset is randomly shuffled (optional but recommended if the data has an inherent order).

- The shuffled dataset is split into k mutually exclusive subsets (folds) of approximately equal size.

- For each of the k iterations, a single fold is retained as the validation data, and the remaining k-1 folds are used as training data.

- The model is trained on the training set and evaluated on the validation set. The performance metric (e.g., accuracy) is recorded.

- After k iterations, the average of the k performance metrics is reported as the overall performance estimate.
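The steps above can be sketched in a few lines of NumPy. The `k_fold_indices` helper is a hypothetical name, not a library function. It covers the shuffling, splitting, and rotation steps. Training and averaging a model for each fold would slot into the loop, which is what scikit-learn's `KFold` combined with `cross_val_score` automates.

```python
import numpy as np

def k_fold_indices(n_samples, k, seed=0):
    """Hypothetical helper: yield (train_idx, test_idx) for each of k folds."""
    order = np.random.default_rng(seed).permutation(n_samples)  # step 1: shuffle
    folds = np.array_split(order, k)                            # step 2: k near-equal folds
    for i in range(k):                                          # step 3: hold out fold i
        train_idx = np.concatenate([folds[j] for j in range(k) if j != i])
        yield train_idx, folds[i]

n, k = 23, 5
test_counts = np.zeros(n, dtype=int)
fold_sizes = []
for train_idx, test_idx in k_fold_indices(n, k):
    assert np.intersect1d(train_idx, test_idx).size == 0        # train and test never overlap
    test_counts[test_idx] += 1
    fold_sizes.append(len(test_idx))
    # steps 4-5: fit a model on train_idx, score it on test_idx, then average the k scores

print(fold_sizes)                             # [5, 5, 5, 4, 4]
print(test_counts.min(), test_counts.max())   # 1 1
```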

A significant characteristic of this method is that each data point appears in the test set exactly once [31]. While KFold is a robust method, its primary drawback emerges with imbalanced datasets: a random partitioning may result in one or more folds having very few or even zero instances of a minority class. This can lead to unreliable performance estimates, as the model cannot be adequately trained or evaluated on underrepresented classes [27] [30].

Stratified K-Fold Cross-Validation

Stratified K-Fold Cross-Validation is an enhancement of the standard KFold method, specifically designed for classification problems. It employs a stratification process, which rearranges the data to ensure that each fold is a good representative of the whole by preserving the original class distribution [28] [30].

For example, consider a binary classification dataset for cancer detection (Class 0: "No Cancer," Class 1: "Cancer") with 100 samples, where 80% are Class 0 and 20% are Class 1. In a 5-fold stratified split, each fold would contain roughly 16 Class 0 samples (80% of the fold size of 20) and 4 Class 1 samples (20% of the fold size of 20). This is in contrast to standard KFold, where a fold might, by chance, contain only 1 or 2 Class 1 samples, or even none at all [30].
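The 100-sample example can be reproduced with scikit-learn. The sketch below assumes the 80 "No Cancer" labels are stored before the 20 "Cancer" labels, as often happens when records are exported by group. It counts the Class 1 samples in each test fold.

```python
import numpy as np
from sklearn.model_selection import KFold, StratifiedKFold

y = np.array([0] * 80 + [1] * 20)   # 0 = "No Cancer", 1 = "Cancer", stored in class order
X = np.zeros((len(y), 1))           # placeholder features; fold assignment ignores them

def cancer_cases_per_fold(splitter):
    """Number of Class 1 samples landing in each test fold."""
    return [int(y[test_idx].sum()) for _, test_idx in splitter.split(X, y)]

print(cancer_cases_per_fold(KFold(n_splits=5)))                                          # [0, 0, 0, 0, 20]
print(cancer_cases_per_fold(KFold(n_splits=5, shuffle=True, random_state=0)))            # varies with the seed
print(cancer_cases_per_fold(StratifiedKFold(n_splits=5, shuffle=True, random_state=0)))  # [4, 4, 4, 4, 4]
```

Unshuffled `KFold` on ordered data puts every cancer case in a single fold. Shuffling makes the counts vary by chance, while `StratifiedKFold` yields exactly 4 per fold.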

This method is widely recommended for classification tasks because it produces more reliable performance estimates, with lower bias and variance compared to regular cross-validation, especially in the presence of class imbalance [28]. Research has demonstrated that stratification is generally a better scheme for accuracy estimation and model selection [28].

Comparative Workflow

The diagram below illustrates the logical sequence and key difference in the splitting mechanism between the two cross-validation strategies.

Figure 1: A comparative workflow of K-Fold and Stratified K-Fold cross-validation, highlighting the key difference in how folds are created.

Experimental Protocols and Performance in Cancer Research

The practical implications of choosing a cross-validation strategy are evident in various cancer prediction studies. Researchers routinely employ these methods to validate models built on diverse data types, from genomic sequences to clinical images.

Experimental Protocol for Model Validation

A standard protocol for implementing these methods in a cancer classification study involves several key steps, as exemplified by research on predicting cervical cancer and classifying multiple cancer types from RNA-seq data [13] [29] [5]:

- Data Preprocessing: This includes handling missing values, removing outliers, and scaling features. For instance, in a study classifying five cancer types from RNA-seq data, features were scaled using StandardScaler, which standardizes each feature to zero mean and unit variance [5].

- Feature Selection: Given the high dimensionality of omics data, regularization techniques such as Lasso (L1 regularization) and Ridge Regression (L2 regularization) are often used to identify the most significant genes or features. Lasso is particularly favored because it can drive some coefficients exactly to zero, performing automatic feature selection [13]. Ridge, by contrast, shrinks coefficients toward zero without eliminating any.

- Model Training with Cross-Validation: The dataset is partitioned using either KFold or StratifiedKFold. A common practice is to use a 5-fold or 10-fold setup.

- For a 5-fold CV, the data is split into 5 subsets. The model is trained on 4 subsets (80% of the data) and validated on the remaining 1 subset (20% of the data). This process is repeated 5 times so that each subset serves as the validation set once [13].

- In Stratified K-Fold, this splitting is done while maintaining the original class proportions in each fold [29].

- Performance Aggregation: The performance metrics (e.g., accuracy, precision, recall, F1-score) from each of the k iterations are averaged to produce a single estimate. This average provides a more robust measure of the model's predictive power than a single train-test split [30].
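This protocol is only sound if scaling and feature selection are fitted inside each training fold. The sketch below uses hypothetical pure-noise data to contrast that with a leaky variant, which scales and selects features on all samples before cross-validating. Univariate F-test selection (SelectKBest) stands in for Lasso here to keep the example short and deterministic. The sample size, feature count, and k=20 are arbitrary choices.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
# Hypothetical noise data: 60 samples, 5,000 "genes", labels unrelated to X.
X = rng.normal(size=(60, 5000))
y = np.array([0] * 30 + [1] * 30)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# Leaky protocol: scale and select features using ALL samples, then cross-validate.
X_sel = SelectKBest(f_classif, k=20).fit_transform(StandardScaler().fit_transform(X), y)
leaky = cross_val_score(LogisticRegression(max_iter=1000), X_sel, y, cv=cv).mean()

# Honest protocol: the pipeline refits scaling and selection inside each training fold.
pipe = make_pipeline(
    StandardScaler(),
    SelectKBest(f_classif, k=20),
    LogisticRegression(max_iter=1000),
)
honest = cross_val_score(pipe, X, y, cv=cv).mean()

print(f"leaky accuracy: {leaky:.2f}, honest accuracy: {honest:.2f}")
```

Because the labels carry no signal, any accuracy well above 0.5 from the leaky protocol is pure optimism. Wrapping every step in a Pipeline lets cross_val_score refit all of them on each training fold.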

Quantitative Comparison in Research Studies

The table below summarizes the use of cross-validation strategies in recent cancer prediction studies, highlighting their application and resulting performance.

Table 1: Application of Cross-Validation in Recent Cancer Prediction Studies

| Cancer Type / Focus | Data Modality | Validation Strategy | Key Reported Performance | Citation |
|---|---|---|---|---|
| Multiple Cancers (BRCA, KIRC, etc.) | RNA-seq Gene Expression | 5-Fold Cross-Validation | Support Vector Machine achieved 99.87% accuracy. | [13] |
| Cervical Cancer | Clinical Risk Factors | Stratified K-Fold Cross-Validation | Random Forest classifier was identified as a good alternative for early classification. | [29] |
| Cervical Cancer | Diagnostic Images | Stratified K-Fold Cross-Validation | Assisted in evaluating ML models (SVM, RF, etc.) for predicting four common diagnostic tests. | [29] |
| Colon & Lung Cancer | Histopathological Images | 10-Fold Cross-Validation | Used to evaluate a novel LBP method, achieving accuracies up to 96.87%. | [32] |
| Head & Neck Carcinoma | Transcriptomic & Clinical | K-Fold & Nested CV | K-fold CV demonstrated greater stability for internal validation in high-dimensional settings. | [9] |

The studies reviewed here favor Stratified K-Fold for classification tasks, including cancer type prediction from genomic or clinical data [13] [29]. By maintaining the class distribution across folds, it prevents scenarios where a fold contains no examples of a rare cancer type, which could lead to overly optimistic or unstable performance estimates. However, for regression problems, such as predicting a continuous outcome like patient survival time, the standard K-Fold approach remains appropriate [28].

Implementing robust cross-validation requires specific computational tools and libraries. The following table details key resources commonly used in cancer prediction research.

Table 2: Essential Research Reagents and Computational Tools for Cross-Validation

| Tool / Solution | Function | Relevance to Cancer Prediction Research | Citation |
|---|---|---|---|
| Scikit-learn (Python) | A comprehensive machine learning library. | Provides the KFold and StratifiedKFold classes for easy implementation of cross-validation, along with numerous algorithms and metrics. | [27] [30] |
| Lasso (L1) Regression | A feature selection and regularization method. | Identifies the most significant genes from high-dimensional transcriptomic data by shrinking less important coefficients to zero. | [13] |
| StratifiedShuffleSplit | An alternative to StratifiedKFold for repeated random splits. | Useful when a specific test set size is required or for a Monte Carlo-style evaluation, though test sets may overlap. | [31] |
| SHAP (SHapley Additive exPlanations) | An Explainable AI (XAI) technique. | Interprets model predictions by quantifying the contribution of each feature (e.g., a specific gene or clinical variable) to the final output. | [5] [33] |
| R Software / Environment | A programming language for statistical computing. | Widely used for survival analysis and handling high-dimensional omics data, with packages available for various validation methods. | [9] |
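The overlap caveat for StratifiedShuffleSplit can be checked directly by counting how often each sample lands in a test set. This is a sketch on hypothetical labels; the helper name max_times_tested is illustrative, not part of scikit-learn.

```python
import numpy as np
from sklearn.model_selection import StratifiedKFold, StratifiedShuffleSplit

y = np.array([0] * 80 + [1] * 20)  # hypothetical 80/20 labels
X = np.zeros((len(y), 1))


def max_times_tested(splitter):
    """Hypothetical helper: the largest number of splits in which any one sample is tested."""
    counts = np.zeros(len(y), dtype=int)
    for _, test_idx in splitter.split(X, y):
        counts[test_idx] += 1
    return int(counts.max())


skf = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
sss = StratifiedShuffleSplit(n_splits=5, test_size=0.2, random_state=0)

print("StratifiedKFold:", max_times_tested(skf))         # 1: test folds are disjoint
print("StratifiedShuffleSplit:", max_times_tested(sss))  # usually > 1: test sets overlap
```

Both splitters preserve class proportions in each test set. Only StratifiedKFold also guarantees that each sample is evaluated exactly once.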

The choice between standard and stratified k-fold cross-validation is not merely a technicality but a critical decision that affects the validity of a cancer prediction model. The experimental evidence and theoretical underpinnings strongly support the use of Stratified K-Fold Cross-Validation for all classification tasks, which constitute the majority of cancer prediction problems (e.g., cancer vs. normal, or multi-class cancer typing). It should be the default choice for any imbalanced dataset, ensuring that performance metrics are not skewed by unrepresentative folds.

Conversely, standard K-Fold Cross-Validation remains suitable for regression tasks, such as predicting continuous disease-free survival times, or in scenarios where the dataset is sufficiently large and the target variable is evenly distributed [28]. Furthermore, for data with a temporal component, such as longitudinal patient studies, specialized methods like time-series split are more appropriate than either KFold or StratifiedKFold [31].
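As a minimal sketch of the time-ordered alternative, scikit-learn's TimeSeriesSplit produces expanding training windows that always precede the test window. It assumes the rows are already sorted chronologically. The 12 records are hypothetical.

```python
import numpy as np
from sklearn.model_selection import TimeSeriesSplit

# Hypothetical longitudinal records, already sorted by date (oldest first).
X = np.arange(12).reshape(-1, 1)

tscv = TimeSeriesSplit(n_splits=3)
for train_idx, test_idx in tscv.split(X):
    # Training indices always come before the test window, so the model
    # is never fitted on records from the "future" of its test set.
    print("train:", train_idx.tolist(), "test:", test_idx.tolist())
```

With 12 records and 3 splits, the test windows are indices 3 to 5, 6 to 8, and 9 to 11, and each is trained on all earlier records.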

In conclusion, within the critical context of cancer research, adopting Stratified K-Fold Cross-Validation is a simple yet powerful step toward developing more reliable, generalizable, and clinically relevant predictive models. It provides researchers with a more trustworthy estimate of how their model will perform in a real-world setting, where dealing with imbalanced class distributions is the norm rather than the exception.

Predictive models using high-dimensional data, such as genomics and transcriptomics, are increasingly used in oncology for time-to-event endpoints like disease-free survival and treatment response [4] [9]. In cancer prediction research, where models developed from molecular data (e.g., 15,000 transcriptomic features) must guide critical clinical decisions, validation strategies become paramount. Internal validation of these models is crucial to mitigate optimism bias prior to external validation, as standard approaches like simple train-test splits can yield overly optimistic performance estimates that fail to generalize to new patient cohorts [4] [9].

The fundamental challenge stems from a methodological flaw: when hyperparameter tuning and performance evaluation are performed on the same data subsets, information "leaks" into the model, creating selection bias and overfitting [34] [35]. This problem is particularly acute in high-dimensional settings where the number of features (p) vastly exceeds the number of samples (n), a common scenario in transcriptomic analysis of tumor samples [4]. Nested cross-validation addresses this vulnerability through a rigorous separation of model selection and model evaluation processes.

Understanding the Nested Cross-Validation Architecture

Nested cross-validation (CV) employs two layers of data partitioning: an inner loop for hyperparameter optimization and model selection, and an outer loop for performance estimation of the selected model. This structure ensures that the test sets used for final evaluation remain completely untouched during the model tuning process, providing a nearly unbiased estimate of how the model will perform on new data drawn from the same population [34] [35].

The following diagram illustrates the complete nested cross-validation workflow:

In the architectural flow above, the outer loop systematically partitions the data into training and test folds, while the inner loop further divides each outer training fold to select optimal hyperparameters without ever exposing the outer test fold to the model selection process. This rigorous separation prevents the information leakage that plagues single-layer validation approaches [34] [35].
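The two-loop architecture can be sketched in scikit-learn by wrapping a GridSearchCV (inner loop) inside cross_val_score (outer loop). The data are simulated with make_classification. The L1-penalized logistic regression and its C grid are illustrative stand-ins for the penalized Cox models discussed below, not the cited study's actual setup.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Hypothetical high-dimensional data: 100 samples, 2,000 features, few informative.
X, y = make_classification(n_samples=100, n_features=2000, n_informative=10, random_state=0)

# L1-penalized (Lasso-style) logistic regression; C controls penalty strength.
model = make_pipeline(StandardScaler(), LogisticRegression(penalty="l1", solver="liblinear"))
param_grid = {"logisticregression__C": [0.01, 0.1, 1.0, 10.0]}

inner_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=1)  # model selection
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=2)  # performance estimation

search = GridSearchCV(model, param_grid, cv=inner_cv, scoring="roc_auc")

# Non-nested: the same folds both choose C and report the score.
non_nested = search.fit(X, y).best_score_

# Nested: each outer test fold is never seen by the inner hyperparameter search.
nested_scores = cross_val_score(search, X, y, cv=outer_cv, scoring="roc_auc")

print(f"non-nested AUC: {non_nested:.3f}")
print(f"nested AUC: {nested_scores.mean():.3f} +/- {nested_scores.std():.3f}")
```

The non-nested figure reuses the same folds for tuning and reporting, so it tends to be higher than the nested estimate. The nested scores come only from outer folds that the search never touched.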

Comparative Analysis of Internal Validation Strategies for Cancer Models

Quantitative Performance Comparison Across Methods

Recent simulation studies using head and neck cancer transcriptomic data provide empirical evidence for comparing validation strategies. The study simulated datasets with clinical variables (age, sex, HPV status, TNM staging) and transcriptomic data (15,000 transcripts) for disease-free survival prediction, with sample sizes ranging from 50 to 1000 patients [4] [9]. Cox penalized regression was performed for model selection, with multiple validation strategies assessed for discriminative performance (time-dependent AUC and C-index) and calibration (3-year integrated Brier Score).

Table 1: Performance characteristics of internal validation methods in high-dimensional cancer prognosis

| Validation Method | Stability | Optimism Bias | Sample Size Efficiency | Computational Cost |
|---|---|---|---|---|
| Train-Test Split | Unstable, high variance [4] [9] | Moderate to high | Inefficient with limited data | Low |
| Conventional Bootstrap | Moderate | Over-optimistic, particularly with small samples [4] [9] | Moderate | Moderate |
| 0.632+ Bootstrap | Moderate | Overly pessimistic, particularly with small samples (n=50 to n=100) [4] [9] | Moderate | Moderate |
| K-Fold Cross-Validation | High stability with larger samples [4] [9] | Low bias | Efficient across sample sizes | Moderate |
| Nested Cross-Validation | High, though some fluctuations based on regularization [4] [9] | Lowest bias | Requires sufficient samples for reliability | High |

Detailed Methodological Comparison

Train-Test Validation: Simple random splitting (e.g., 70% training, 30% testing) demonstrates unstable performance with high variance across different random splits, making it unreliable for model evaluation in resource-limited settings [4] [9].
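The instability of a single split is easy to observe by repeating a 70/30 split with different random seeds on the same data. This is a sketch on simulated data. The cohort size, feature count, and plain logistic regression are arbitrary choices, not the study's design.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import train_test_split

# Hypothetical small cohort: 80 patients, 500 features.
X, y = make_classification(n_samples=80, n_features=500, n_informative=10, random_state=0)

aucs = []
for seed in range(20):  # 20 different random 70/30 splits of the same data
    X_tr, X_te, y_tr, y_te = train_test_split(
        X, y, test_size=0.3, stratify=y, random_state=seed
    )
    clf = LogisticRegression(max_iter=1000).fit(X_tr, y_tr)
    aucs.append(roc_auc_score(y_te, clf.decision_function(X_te)))

aucs = np.array(aucs)
print(f"AUC across splits: {aucs.min():.2f} to {aucs.max():.2f} (sd {aucs.std():.2f})")
```

The data and model never change; the spread comes entirely from which patients happen to land in the test set.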

Bootstrap Methods: Conventional bootstrap approaches demonstrate significant optimism bias, overestimating model performance, while the 0.632+ bootstrap correction swings to the opposite extreme, becoming overly pessimistic particularly with small sample sizes (n=50 to n=100) common in preliminary cancer studies [4] [9].
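For reference, the textbook 0.632 and 0.632+ estimators (Efron and Tibshirani) can be written for a generic error rate as below. Here err-bar is the apparent (training) error, Err^(1) is the leave-one-out bootstrap error computed only on samples left out of each bootstrap draw, and gamma is the no-information error rate. R is the relative overfitting rate, truncated to [0, 1], with Err^(1) capped at gamma. This is the standard error-based form; it is not necessarily the exact variant the cited study applied to the C-index, time-dependent AUC, or Brier score.

```latex
\begin{aligned}
\widehat{\mathrm{Err}}^{(.632)} &= 0.368\,\overline{\mathrm{err}} + 0.632\,\widehat{\mathrm{Err}}^{(1)} \\
\hat{R} &= \frac{\widehat{\mathrm{Err}}^{(1)} - \overline{\mathrm{err}}}{\hat{\gamma} - \overline{\mathrm{err}}} \in [0, 1],
\qquad
\hat{w} = \frac{0.632}{1 - 0.368\,\hat{R}} \\
\widehat{\mathrm{Err}}^{(.632+)} &= (1 - \hat{w})\,\overline{\mathrm{err}} + \hat{w}\,\widehat{\mathrm{Err}}^{(1)}
\end{aligned}
```

With no overfitting (R = 0) the weight is 0.632 and the 0.632 estimator is recovered. As overfitting grows (R toward 1), the weight approaches 1 and the estimate approaches Err^(1), which comes from models trained on only about 63.2% of the distinct samples. This is one plausible reason the correction can turn pessimistic when n is small and p is large.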

Standard K-Fold Cross-Validation: This approach strikes a reasonable balance between bias and variance, showing improved stability with larger sample sizes. However, when used for both hyperparameter tuning and performance estimation, it remains vulnerable to optimism bias as the same data informs both model selection and evaluation [36].

Nested Cross-Validation: By completely separating the hyperparameter optimization phase (inner loop) from the performance estimation phase (outer loop), nested CV provides the least biased estimate of true generalization error, making it particularly valuable for assessing model viability before proceeding to expensive external validation studies [4] [34] [9].

Experimental Evidence: A Simulation Study in Head and Neck Cancer

Methodology and Experimental Protocol

A rigorous simulation study provides concrete evidence for comparing validation strategies in high-dimensional time-to-event settings relevant to cancer prediction [4] [9]. The experimental protocol was designed as follows:

Data Generation: Datasets of varying sample sizes (50, 75, 100, 500, and 1000) were simulated with 100 replicates per scenario, inspired by the SCANDARE head and neck cohort (NCT03017573). Simulated data included clinical variables (age, sex, HPV status, TNM staging) and transcriptomic data (15,000 transcripts) with disease-free survival as the endpoint [9].

Model Development: Cox penalized regression (LASSO, elastic net) was performed for model selection, accounting for the high-dimensional feature space [4] [9].

Validation Strategies Compared: The study compared train-test (70% training), bootstrap (100 iterations), 5-fold cross-validation, and nested cross-validation (5×5) to assess discriminative performance (time-dependent AUC and C-index) and calibration (3-year integrated Brier Score) [4] [9].

Evaluation Metrics: Performance was assessed using discrimination metrics (C-index, time-dependent AUC) that measure the model's ability to separate patients with different outcomes, and calibration metrics (integrated Brier Score) that assess the agreement between predicted and observed event rates [4].
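For concreteness, Harrell's C-index can be computed from observed times, event indicators, and predicted risk scores as below. This is a plain O(n²) sketch, not the implementation used in the cited study. Pairs with tied observed times are simply skipped, and the sketch assumes at least one comparable pair exists. The toy cohort is hypothetical.

```python
import numpy as np


def harrell_c_index(time, event, risk):
    """Harrell's concordance index for right-censored survival data.

    A pair (i, j) is comparable when subject i had the event and subject j
    was still under observation at a later time. The pair is concordant when
    i also has the higher predicted risk; tied risks count as half.
    """
    time, event, risk = map(np.asarray, (time, event, risk))
    concordant, comparable = 0.0, 0
    for i in range(len(time)):
        if not event[i]:
            continue  # a censored subject cannot anchor a comparable pair
        for j in range(len(time)):
            if time[i] < time[j]:  # j outlived i's event time
                comparable += 1
                if risk[i] > risk[j]:
                    concordant += 1.0
                elif risk[i] == risk[j]:
                    concordant += 0.5
    return concordant / comparable


# Hypothetical toy cohort: a higher risk score should mean an earlier event.
time = [2, 4, 6, 8]
event = [1, 1, 0, 1]  # the third subject is censored
print(harrell_c_index(time, event, [0.9, 0.7, 0.4, 0.1]))  # 1.0 (perfect ranking)
print(harrell_c_index(time, event, [0.1, 0.4, 0.7, 0.9]))  # 0.0 (fully reversed)
```

A value of 0.5 corresponds to random ranking, which is what constant risk scores produce.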

Key Findings and Quantitative Results

The simulation results demonstrated clear differences in validation performance across methods and sample sizes:

Table 2: Simulation results for internal validation methods across sample sizes